The world is fundamentally characterized by an underlying flex or slop – a kind of slack or ‘play’ that allows some bits to move about or adjust without much influencing, and without being much influenced by, other bits. Thus we can play jazz in Helsinki, as loud as we please, without troubling the Trappists in Montana. Moths can fly into the night with only a minimal expenditure of energy, because they have to rearrange only a tiny fraction of the world’s mass. An idea can erupt in Los Angeles, turn into a project, capture the fancy of hundreds of people, and later subside, never to be heard of again, all without having any impact whatsoever on the goings-on in New York.

Brian Cantwell Smith, On The Origin of Objects, p. 199

I’m a week into my new job, and the main thing that I’ve learned about space is that it’s full of acronyms. The work looks interesting though. I’m going to be working on mission planning software, currently for Meteosat weather satellites but potentially for a bunch of other satellites as well. This is basically a big scheduler, something like Gantt charts for project managers but with all the bars on the chart being stuff like manoeuvres and uplink windows. I’m being encouraged to learn as much about the surrounding context as I can, which I really appreciate.

I don’t know how much time I’m going to get to write or think in the next couple of months, as I’ll be too busy cramming acronyms into my head. So the next one of these might be short, I don’t know. But this month I ended up writing a lot (helped by an unexpected week off as I started my job a bit later than originally planned). I’ve been reading Brian Cantwell Smith’s On the Origin of Objects. This is a difficult book with a lot more metaphysics than I realised I was signing up for, and I still don’t understand the overall story he’s telling. But some of it has really helped me start to understand some things that were confusing me before, especially his concept of ‘the middle distance’, which I’ll get into below.

I’m not going to try and summarise what this book is about, but there’s a brief description by David Chapman here if you want a bit more context. Instead I’m going to give some rambling background on how I got interested in this, and then hopefully start to explain what this ‘middle distance’ thing is about.

Cognitive decoupling, banana phones, rabbits, and the St. Louis Arch

So I originally got into this by thinking about that ‘cognitive decoupling’ idea again. I’ve been going back to it on and off, and spent a while reading some background material. As Sarah Constantin says in her original blog post, this term comes from Stanovich, so I wanted to trace what his influences were. The best review I found was in his paper Defining features versus incidental correlates of Type 1 and Type 2 processing, which refers back to an older paper, Pretense and Representation by Leslie:

In a much-cited article, Leslie (1987) modeled pretense by positing a so-called secondary representation (see Perner 1991) that was a copy of the primary representation but that was decoupled from the world so that it could be manipulated—that is, be a mechanism for simulation.

I read the Leslie paper, and was surprised by how literal it was. (Apparently 80s cognitive science was like that. This section grew out of that linked Twitter thread and a string of emails with David Chapman, so I’ll refer to those a bit.) Leslie is interested in how pretending works – how a small child pretends that a banana is a telephone, to take one of his examples. And the mechanism he posits is… copy-and-paste, but for the brain:

As in, we get some kind of perceptual input which causes us to store a ‘representation’ that means ‘this is a banana’. Then we make a copy of this. Now we can operate on the copy (‘this banana is a telephone’) without also messing up the banana representation. They’ve become decoupled.

What are these ‘representations’? Leslie just has this to say:

What I mean by representation will, I hope, become clear as the discussion progresses. It has much in common with the concepts developed by the information-processing, or cognitivist, approach to cognition and perception…

This is followed by a long string of references to Chomsky, Dennett, etc. From Leslie’s use of the term in the paper it appears that his ‘representations’ can be put into a rough correspondence with English propositions about bananas, telephones, and cups of tea, and that we then use them as a kind of raw material to run inference rules on and come to new conclusions:

This is unsatisfying because it completely ignores the question of how all these representations mean anything in the real world – how do we know that the string of characters ‘cups contain water’, or its postulated mental equivalent, has anything to do with actual cups and actual water? These representations are just sitting there ‘in the head’, too causally disconnected from the world to be a useful explanation of anything.

(This is mostly off topic for the point I’m trying to make today, but David Chapman pointed out another big problem with Stanovich using this Leslie paper as a model to build on. Stanovich is interested in what makes actions or behaviours rational, and he wants cognitive decoupling to be at least a partial explanation of this. Leslie is looking at toddlers pretending that bananas are telephones. If even very young children are passing this test for ‘rationality’, it’s not going to be much use for discriminating between ‘rational’ and ‘irrational’ behaviour in adults. So Stanovich would need a narrower definition of ‘decoupling’ that excludes the banana-telephone example if he wants to use it as a rationality criterion.)

Now, when I first started thinking about this, I also ended up taking a rather unhelpful path, but in completely the opposite direction. Too causally coupled rather than not causally coupled enough. I just nicked the phrase ‘cognitive decoupling elite’ from Sarah Constantin’s post without doing any background reading at all, and I managed to hallucinate a completely different intellectual history for the term. ‘Decoupling’ sounds very physicsy to me, bringing up associations of actual interaction forces and coupling constants, and I’d been reading Dreyfus’s Why Heideggerian AI Failed, which discusses dynamical-systems-inspired models of cognition:

Fortunately, there is at least one model of how the brain could provide the causal basis for the intentional arc. Walter Freeman, a founding figure in neuroscience and the first to take seriously the idea of the brain as a nonlinear dynamical system, has worked out an account of how the brain of an active animal can find and augment significance in its world. On the basis of years of work on olfaction, vision, touch, and hearing in alert and moving rabbits, Freeman proposes a model of rabbit learning based on the coupling of the brain and the environment.

In this case, the rabbit and environment are literally, physically coupled together: a carrot smell out in the world pulls its olfactory bulb into a different state, which itself pulls the rabbit into a different behaviour state, with a causal coupling so direct that referring to it as a ‘representation’ seems like overkill:

Freeman argues that each new attractor does not represent, say, a carrot, or the smell of carrot, or even what to do with a carrot. Rather, the brain’s current state is the result of the sum of the animal’s past experiences with carrots, and this state is directly coupled with or resonates to the affordance offered by the current carrot.

This seems much more promising than the bananaphone story for explaining why situations intrinsically mean something about the world: the world is directly reaching in and shoving the rabbit around! But the very directness of the causal coupling is also a weak point… after all, we want to talk about decoupling. The idea behind ‘cognitive decoupling’ is to be able to pull away from the world long enough to consider things in the abstract, without all the associations that normally get dragged along for free.

At some point I was googling a bunch of keywords like ‘dynamical systems’ and ‘decoupling’ in the hope of fishing up something interesting, and I came across a review by Rick Grush of Mind as Motion: Explorations in the Dynamics of Cognition by Port and van Gelder, which had a memorable description of the problem:

…many paradigmatically cognitive capacities seem to have nothing at all to do with being in a tightly coupled relationship with the environment. I can think about the St. Louis Arch while I’m sitting in a hot tub in southern California or while flying over the Atlantic Ocean.

He argues (in the context of mental imagery) that there needs to be a ‘decoupling’ process as well:

…what is needed, in slightly more refined terms, is an executive part, C (for Controller), of an agent, A, which is in an environment E, decoupling from E, and coupling instead to some other system E’ that stands in for E, in order for the agent to ‘think about’ E (see Figure 2). Cognitive agents are exactly those which can selectively couple to either the ‘real’ environment, or to an environment model, or emulator, perhaps internally supported, in order to reason about what would happen if certain actions were undertaken with the real environment.

This actually sounds like a plausible alternate history for Stanovich’s decoupling idea, with its intellectual roots in dynamical systems rather than the representational theory of mind. So maybe my hallucinations were not too silly after all.

David Chapman pointed out that this idea of a ‘middle distance’ between direct causal coupling and complete irrelevance was a key part of Brian Cantwell Smith’s argument in On the Origin of Objects. I was already vaguely aware of this from a helpful exchange somewhere in the bowels of a Meaningness comments section (the whole thread is worth reading, but I’m thinking about the bit starting here) and had been meaning to read the book for a while. It’s been well worth doing – he has some great examples which have really helped me get clearer on this.

So this whole section was a very circuitous path to get to Cantwell Smith’s ideas, which are what I really want to talk about…

The middle distance

This idea of ‘the middle distance’ is only part of the book. Cantwell Smith is making a very complex, densely interconnected argument that I don’t understand at all well at the moment. I haven’t even read all of it yet. I’m just going to talk about some of the bits I do understand. After getting rather lost in the metaphysics of the earlier chapters, I had a lot of success picking up the thread in Chapter 6, ‘Flex and Slop’. It has three very illuminating examples, which I’ll go through in turn.

First, super-sunflowers:

… imagine that a species of “super-sunflower” develops in California to grow in the presence of large redwoods. Suppose that ordinary sunflowers move heliotropically, as the myth would have it, but that they stop or even droop when the sun goes behind a tree. Once the sun re-emerges, they can once again be effectively driven by the direction of the incident rays, lifting up their faces, and reorienting to the new position. But this takes time. Super-sunflowers perform the following trick: even when the sun disappears, they continue to rotate at approximately the requisite ¼° per minute, so that the super-sunflowers are more nearly oriented to the light when the sun appears.

A normal sunflower is directly causally coupled to the movement of the sun. This is analogous to simple feedback systems like, for example, the bimetallic strip in a thermostat, which curls when the strip is heated and one side expands more than the other. In some weak sense, the curve of the bimetallic strip ‘represents’ the change in temperature. But, as with the rabbit’s olfactory bulb, the coupling is so direct that calling it ‘representation’ is dragging in more intentional language than we need. It’s really just a load of physics.

The super-sunflower brings in a new ingredient: it carries on attempting to track the sun even when they’re out of causal contact. Cantwell Smith argues that this disconnected tracking is the (sunflower) seed that genuine intentionality grows from. We are now on the way to something that can really be said to ‘represent’ the movement of the sun:

This behaviour, which I will call “non-effective tracking”, is no less than the forerunner of semantics: a very simple form of effect-transcending coordination in some way essential to the overall existence or well-being of the constituted system.
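To check I’d understood the difference between the two flowers, I found it helpful to write it out as a toy simulation. This is only a rough sketch under my own assumptions, not anything from the book: the function and variable names are made up, the sun is assumed to move at a steady ¼° per minute, and I’ve let a flower snap to the sun’s direction instantly whenever the sun is visible (in the original example, reorienting takes time, which is the whole reason the trick is worth doing).

```python
# Toy model of the super-sunflower. All names and numbers here are my own
# assumptions for illustration, apart from the 1/4 degree per minute rate.
DEGREES_PER_MINUTE = 0.25


def track(sun_angles, sun_visible, keeps_rotating):
    """Return the flower's heading (in degrees) at each minute."""
    heading = sun_angles[0]
    headings = []
    for angle, visible in zip(sun_angles, sun_visible):
        if visible:
            # Effective tracking: the incident rays drive the flower directly.
            heading = angle
        elif keeps_rotating:
            # Non-effective tracking: no causal contact, carry on at the usual rate.
            heading += DEGREES_PER_MINUTE
        # An ordinary sunflower just stays where it was while the sun is hidden.
        headings.append(heading)
    return headings


minutes = range(120)
sun_angles = [DEGREES_PER_MINUTE * t for t in minutes]
sun_visible = [not (30 <= t < 90) for t in minutes]  # behind a redwood for an hour

ordinary = track(sun_angles, sun_visible, keeps_rotating=False)
super_flower = track(sun_angles, sun_visible, keeps_rotating=True)

# How far behind the sun is each flower in the last minute before it reappears?
last_hidden = 89
ordinary_error = sun_angles[last_hidden] - ordinary[last_hidden]
super_error = sun_angles[last_hidden] - super_flower[last_hidden]
print(ordinary_error, super_error)
```

With these made-up numbers the ordinary sunflower is 15° behind when the sun comes back out, while the super-sunflower is already pointing the right way. The interesting branch is the middle one: the heading is being updated with no input from the sun at all, and it only counts as tracking because the world happens to cooperate.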

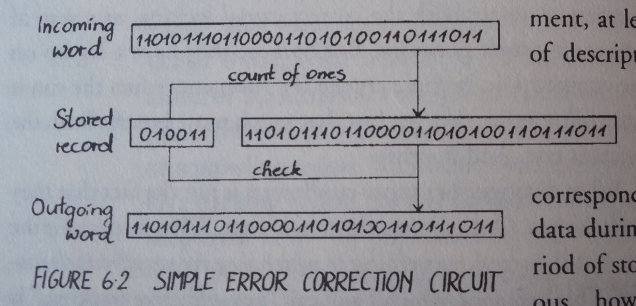

Now for a second, more realistic example. Consider the following error-checking system:

There’s a 32-bit word that we want to send, but we want to be sure that it’s been transmitted correctly. So we also send a 6-bit ‘check code’ containing the number of ones (19 in this instance, or 010011 in binary). If these don’t match, we know something’s gone wrong.

Obviously, we want the 6-bit code to stay coordinated with the 32-bit word for the whole storage period, and not just randomly change to some other count of ones, or it’s useless. Less obviously (“because it is such a basic assumption underlying the whole situation that we do not tend to think about it explicitly”), we don’t want the 6-bit code to invariably be correlated to the 32-bit word, so that a change in the word always changes the code. Otherwise we couldn’t do error checking at all! If a cosmic ray flips one of the bits in the word, we want the code to remain intact, so we can use it to detect the error. So again we have this ‘middle distance’ between direct coupling and irrelevance.
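The check code is simple enough to write down directly. Here’s a minimal sketch, with the caveat that the function names and the particular 32-bit word are my own inventions (I just picked a word that happens to have 19 ones, to match the count above), and real error-detecting codes are a lot more sophisticated than this.

```python
WORD_BITS = 32
CODE_BITS = 6  # enough to hold any count from 0 to 32


def ones_count(word):
    """Count the 1 bits in a 32-bit word."""
    return bin(word & 0xFFFFFFFF).count("1")


def make_check_code(word):
    """Compute the code once, while word and code are still in direct contact."""
    return ones_count(word)


def is_consistent(word, code):
    """Reconcile later: does the stored code still match the word?"""
    return ones_count(word) == code


word = 0x0007FFFF  # hypothetical word with 19 ones
code = make_check_code(word)
code_bits = format(code, "06b")  # the 6-bit check code as a binary string

# A 'cosmic ray' flips one bit of the word. The code is stored separately,
# so it does not change along with it.
corrupted = word ^ (1 << 5)

print(code_bits, is_consistent(word, code), is_consistent(corrupted, code))
```

The error only shows up because `code` stayed put when `word` changed. If the code were recomputed every time the word changed (too tightly coupled), the comparison would always pass, and if it drifted about at random (not coupled enough), the comparison would tell us nothing. A count this crude also has blind spots: flipping one 0 to 1 and one 1 to 0 leaves the count unchanged.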

One final real-world example: file caches. We want the data stored in the cache to be similar to the real data, or it’s not going to be much of a cache. At the same time, though, if we make everything exactly the same as the original data store, it’s going to take exactly as long to access the cache as it is to access the original data, so that it’s no longer really a cache.

In all these examples, it’s important that the ‘representing’ system tries to stay coordinated with the distant ‘represented’ system while they’re out of direct contact. The super-sunflower keeps turning, the check code maintains its count of ones, the file cache maintains the data that was previously written to it:

In all these situations, what starts out as effectively coupled is gradually pulled apart, but separated in such a way as to honor a non-effective long-distance coordination condition, leading eventually to effective reconnection or reconciliation.

This is where the ‘flex and slop’ (quoted at the start of this newsletter) comes in. The world needs some level of underlying slop for this to be possible – things can get causally disconnected enough to rearrange the representation independently of the thing being represented. (This is what makes computation ‘cheap’ – we can rearrange some bits without having to also rearrange some big object elsewhere that they are supposed to represent some aspect of.) To make the point, Cantwell Smith compares this with two imaginary worlds where this sort of ‘middle distance’ representation couldn’t get started. The first world consists of nothing but a huge assemblage of interlocking gears that turn together exactly without slipping, all at the same time. In this world, there is no slop at all, so nothing can ever get out of causal contact with anything else. You could maybe say that one cog ‘represents’ another cog, but really everything is just like the thermostat, too directly coupled to count interestingly as a representation. The second world is just a bunch of particles drifting in the void without interaction. This has gone beyond slop into complete irrelevance. Nothing is connected enough to have any kind of structural similarity to another thing.

The three examples given above – file caches, error checking and the super-sunflower – are too simple to have anything much like genuine ‘intentional content’. The tracking behaviour of the representing object is too simple – the super-sunflower just moves across the sky, and the file cache and check code just sit there unchanged. Cantwell Smith acknowledges this, and says that the exchange between ‘representer’ and ‘represented’ has to have a lot more structure, with alternating patterns of being in and out of causal contact, and some other stuff that somehow helps to individuate the two as separate objects. (This is the point where I get lost in some complicated argument about ‘patterns of cross-cutting extension’, which I haven’t managed to disentangle yet.)

From my reading so far, I don’t think he offers up any concrete examples that demonstrate this more complicated behaviour. So I don’t have much of a feeling for how well this works. I’m still convinced that this ‘middle distance’ idea is an important insight – it’s exactly the concept I was missing when I was reading all this stuff about cognitive decoupling. But I don’t yet have a good feeling for how far it can be pushed. I’m going to need to think about this a lot more.

Bumping and shoving

As a final aside, you might notice that none of this discussion about how computers mean things gets into Turing machines or the lambda calculus or any of the rest of the standard ‘theory of computation’. Cantwell Smith would say that that’s because the standard theory just doesn’t get anywhere near these questions of meaning and intention. Instead, it’s a theory of causal effectiveness only – how a machine physically does stuff, basically. It has nothing to say about how the resulting stuff it does has anything to do with any other distant stuff in the world. That’s the bit that he is trying to work on. (There’s a good lecture of his on YouTube that’s specifically about this – I learned of it from Gary Basin on Twitter.)

Cantwell Smith has a great phrase for direct causal effectiveness – he calls it all ‘bumping and shoving’. (He has a real gift for memorable turns of phrase, which saves the more technical exposition from getting unreadably dry.) So the theory of computation is a theory of bumping and shoving, in his terms.

I enjoyed this because something similar has been bothering me for ages in the context of physics, where ‘information’ is used in a similar way. I’ve been throwing that word around a lot myself in this newsletter while talking about Shannon entropy and its variants, but it’s a very desiccated use of the word, drained of all its intentional content. And physicists often don’t recognise this when they start philosophising, and throw the word around as though they want it to comprehend this much wider meaning. This has always annoyed me, but I haven’t had the resources to really articulate my annoyance, and I’m finally starting to change that.

Next month

I will be learning a million acronyms. I probably won’t be doing much physics – instead I’d like to direct whatever leftover energy I have towards writing up blog posts, which seems easier to do in parallel with the early stages of getting used to the new job. I want to finally get out a follow-up post to that decoupling one, with some of my bananaphone ramblings from this newsletter, and some other notes I’ve built up. After that I’d like to write up my understanding of flex and slop and the middle distance, and also have a second run at that shitpost-to-scholarship idea (thanks for the comments). That’s going to keep me occupied for a while!

Cheers,

Lucy