At the end of my last post, I talked about Brian Cantwell Smith’s idea of ‘the middle distance’ – an intermediate space between complete causal disconnectedness and rigid causal coupling. I was already vaguely aware of this idea from a helpful exchange somewhere in the bowels of a Meaningness comments section but hadn’t quite grasped its importance (the whole thread is worth reading, but I’m thinking about the bit starting here). Then I blundered into my own clumsy restatement of the idea while thinking about cognitive decoupling, and finally saw the point. So I started reading On the Origin of Objects.

It’s a difficult book, with a lot more metaphysics than I realised I was signing up for, and this ‘middle distance’ idea is only a small part of a very complex, densely interconnected argument that I don’t understand at all well and am not even going to attempt to explain. But the examples Smith uses to illustrate the idea are very accessible without the rest of the machinery of the book, and helpful on their own.

I was also surprised by how little I could find online – searching for e.g. “brian cantwell smith” “middle distance” turns up lots of direct references to On The Origin of Objects, and a couple of reviews, but not much in the way of secondary commentary explaining the term. You pretty much have to just go and read the whole book. So I thought it was worth making a post that just extracted these three examples out.

Example 1: Super-sunflowers

Smith’s first example is fanciful but intended to quickly give the flavour of the idea:

… imagine that a species of “super-sunflower” develops in California to grow in the presence of large redwoods. Suppose that ordinary sunflowers move heliotropically, as the myth would have it, but that they stop or even droop when the sun goes behind a tree. Once the sun re-emerges, they can once again be effectively driven by the direction of the incident rays, lifting up their faces, and reorienting to the new position. But this takes time. Super-sunflowers perform the following trick: even when the sun disappears, they continue to rotate at approximately the requisite ¼° per minute, so that the super-sunflowers are more nearly oriented to the light when the sun appears.

A normal sunflower is directly coupled to the movement of the sun. This is analogous to simple feedback systems like, for example, the bimetallic strip in a thermostat, which curls when the strip is heated and one side expands more than the other. In some weak sense, the curve of the bimetallic strip ‘represents’ the change in temperature. But the coupling is so direct that calling it ‘representation’ is dragging in more intentional language than we need. It’s just a load of physics.

The super-sunflower brings in a new ingredient: it carries on attempting to track the sun even when they’re out of direct causal contact. Smith argues that this disconnected tracking is the (sunflower) seed that genuine intentionality grows from. We are now on the way to something that can really be said to ‘represent’ the movement of the sun:

This behaviour, which I will call “non-effective tracking”, is no less than the forerunner of semantics: a very simple form of effect-transcending coordination in some way essential to the overall existence or well-being of the constituted system.
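To make the contrast concrete, here is a minimal sketch of the two flowers as a toy simulation. Everything in it is an illustrative assumption rather than anything from Smith's book: the function name `lag_when_sun_returns`, the one-minute time step, and the simplification that a flower snaps instantly to the sun whenever it is visible. The only figure taken from the quote is the ¼° per minute rate.

```python
SUN_RATE = 0.25  # degrees per minute, the figure from the quoted passage


def lag_when_sun_returns(sky, keeps_turning, internal_rate=SUN_RATE):
    """Return how many degrees the flower trails the sun after the last minute.

    sky is a sequence of booleans, one per minute: True when the sun is visible.
    keeps_turning=True models the super-sunflower, False the ordinary one.
    internal_rate is the flower's own (hypothetical) estimate of the sun's rate.
    """
    sun = 0.0
    flower = 0.0
    for sun_visible in sky:
        sun += SUN_RATE
        if sun_visible:
            flower = sun             # effective coupling: driven by the light
        elif keeps_turning:
            flower += internal_rate  # non-effective tracking: carry on regardless
        # an ordinary sunflower simply stays where it was
    return sun - flower


# One hour of sunshine, then forty minutes behind a redwood.
sky = [True] * 60 + [False] * 40
ordinary_lag = lag_when_sun_returns(sky, keeps_turning=False)
super_lag = lag_when_sun_returns(sky, keeps_turning=True)
print(ordinary_lag, super_lag)  # 10.0 0.0
```

Passing a slightly wrong `internal_rate` (say 0.2) gives a lag somewhere in between, which matches the "approximately" in the quote: the super-sunflower only has to be close enough to make reconnection cheaper.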

Example 2: Error checking

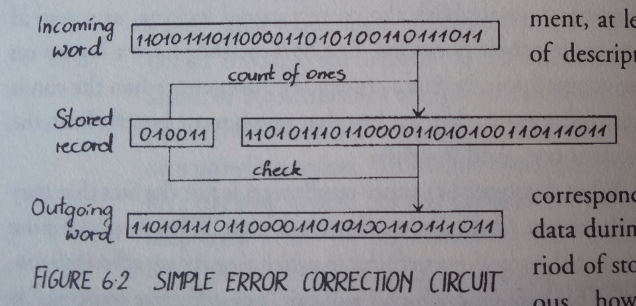

Now for a more realistic example. Consider the following simple error-checking system:

There’s a 32 bit word that we want to send, but we want to be sure that it’s been transmitted correctly. So we also send a 6-bit ‘check code’ containing the number of ones (19 of them in this instance, or 010011 in binary). If these don’t match, we know something’s gone wrong.

Obviously, we want the 6-bit code to stay coordinated with the 32-bit word for the whole storage period, and not just randomly change to some other count of ones, or it’s useless. Less obviously (“because it is such a basic assumption underlying the whole situation that we do not tend to think about it explicitly”), we don’t want the 6-bit code to invariably be correlated to the 32-bit word, so that a change in the word always changes the code. Otherwise we couldn’t do error checking at all! If a cosmic ray flips one of the bits in the word, we want the code to remain intact, so we can use it to detect the error. So again we have this ‘middle distance’ between direct coupling and irrelevance.
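As a rough sketch of the scheme just described, the snippet below stores a word together with its count of ones and then checks the pair later. The helper names (`count_ones`, `store`, `looks_intact`) and the particular word are assumptions chosen for illustration; the word is simply one that happens to contain 19 ones, so the code comes out as 010011. The last line shows the "too tightly coupled" failure: if the code is recomputed from whatever the word currently is, the check can never fail.

```python
WORD_BITS = 32


def count_ones(word):
    """Number of one-bits in a 32-bit word (0 to 32, so it fits in 6 bits)."""
    return bin(word & (2**WORD_BITS - 1)).count("1")


def store(word):
    """Compute the check code once, at the moment word and code are in contact."""
    return word, count_ones(word)


def looks_intact(word, code):
    """Reconcile later: does the stored code still match the word?"""
    return count_ones(word) == code


word, code = store((1 << 19) - 1)    # a word with exactly 19 ones
print(format(code, "06b"))           # 010011

corrupted = word ^ (1 << 5)          # a cosmic ray flips one bit of the word
print(looks_intact(word, code))      # True
print(looks_intact(corrupted, code)) # False: the untouched code exposes the error

# Rigid coupling: a code that always follows the word detects nothing.
print(looks_intact(corrupted, count_ones(corrupted)))  # True
```

A count of ones is a weak check: it misses any error that flips one bit from 1 to 0 and another from 0 to 1. That doesn't matter for the point being made here.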

Example 3: File caches

One final real-world example: file caches. We want the data stored in the cache to be similar to the real data, or it’s not going to be much of a cache. At the same time, though, if we make everything exactly the same as the original data store, it’s going to take exactly as long to access the cache as it does to access the original data, so that it’s no longer really a cache.
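Here is a minimal sketch of that trade-off, with an in-memory dictionary standing in for the slow data store. The classes `SlowStore` and `Cache` and the `reconcile` method are hypothetical names made up for this example, not any real caching API. The cache is useful precisely because it does not consult the store on every read, which also means it can drift out of date until the two are brought back into contact.

```python
class SlowStore:
    """Stand-in for the original data store; counts how often it is read."""

    def __init__(self):
        self.data = {}
        self.reads = 0

    def read(self, key):
        self.reads += 1
        return self.data[key]

    def write(self, key, value):
        self.data[key] = value


class Cache:
    """Keeps local copies and only touches the store on a miss or a reconcile."""

    def __init__(self, store):
        self.store = store
        self.copies = {}

    def read(self, key):
        if key not in self.copies:
            self.copies[key] = self.store.read(key)
        return self.copies[key]

    def reconcile(self, key):
        """Re-establish contact with the store and refresh the local copy."""
        self.copies[key] = self.store.read(key)
        return self.copies[key]


store = SlowStore()
store.write("a", 1)
cache = Cache(store)

cache.read("a")
cache.read("a")
print(store.reads)           # 1: the second read never touched the store

store.write("a", 2)          # the store changes while out of contact
print(cache.read("a"))       # 1: stale, but still tracking what was there
print(cache.reconcile("a"))  # 2
```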

Flex and slop

In all these examples, it’s important that the ‘representing’ system tries to stay coordinated with the distant ‘represented’ system while they’re out of direct contact. The super-sunflower keeps turning, the check code maintains its count of ones, the file cache maintains the data that was previously written to it:

In all these situations, what starts out as effectively coupled is gradually pulled apart, but separated in such a way as to honor a non-effective long-distance coordination condition, leading eventually to effective reconnection or reconciliation.

For this to be possible, the world needs to be able to support the right level of separation:

The world is fundamentally characterized by an underlying flex or slop – a kind of slack or ‘play’ that allows some bits to move about or adjust without much influencing, and without being much influenced by, other bits. Thus we can play jazz in Helsinki, as loud as we please, without troubling the Trappists in Montana. Moths can fly into the night with only a minimal expenditure of energy, because they have to rearrange only a tiny fraction of the world’s mass. An idea can erupt in Los Angeles, turn into a project, capture the fancy of hundreds of people, and later subside, never to be heard of again, all without having any impact whatsoever on the goings-on in New York.

This slop makes causal disconnection possible – ‘subjects’ can rearrange the representation independently of the ‘objects’ being represented. (This is what makes computation ‘cheap’ – we can rearrange some bits without having to also rearrange some big object elsewhere that they are supposed to represent some aspect of.) To make the point, Smith compares this with two imaginary worlds where this sort of ‘middle distance’ representation couldn’t get started. The first world consists of nothing but a huge assemblage of interlocking gears that turn together exactly without slipping, all at the same time. In this world, there is no slop at all, so nothing can ever get out of causal contact with anything else. You could maybe say that one cog ‘represents’ another cog, but really everything is just like the thermostat, too directly coupled to count interestingly as a representation. The second world is just a bunch of particles drifting in the void without interaction. This has gone beyond slop into complete irrelevance. Nothing is connected enough to have any kind of structural relation to anything else.

The three examples given above – file caches, error checking and the super-sunflower – are really only one step up from the thermostat, too simple to have anything much like genuine ‘intentional content’. The tracking behaviour of the representing object is too simple – the super-sunflower just moves across the sky, and the file cache and check code just sit there unchanged. Smith acknowledges this, and says that the exchange between ‘representer’ and ‘represented’ has to have a lot more structure, with alternating patterns of being in and out of causal contact, and some other ‘stabilisation’ patterns that I don’t really understand, that somehow help to individuate the two as separate objects. At this point, the concrete examples run completely dry, and I get lost in some complicated argument about ‘patterns of cross-cutting extension’ which I haven’t managed to disentangle yet. The basic idea illustrated by the three examples was new to me, though, and worth having on its own.

Brian Cantwell Smith is always very good but “on the origin of objects” was very difficult for me to get anything actionable out of.

Furthermore reference and grounding is something I don’t understand.

I would like to be able to treat intentions as something solid and well defined but I’ve been learning lately that people don’t have to decide what they mean or what speech act they are performing before they say something. Often the speech act is decided a good while later. One example from a book on pragmatics was a customer at a cafe (in the 80s) asked “Is this the non-smoking section?” and the waitress constructed it into a request by answering “It can be non-smoking”.

Since intentions are ambiguous I’d like to be able to instead deal with the results and say that the effects of an action are what it means, but “On the tigers in India” makes the point that people can talk about things that they will never experience, and let their words be grounded by other people in distant places and times.

Objects obviously exist so I want to know what makes an object. What I’d like it to be is a predictable piece of experience. Something that acts in certain ways in response to actions. (affordances can be a simple record of past experience with it)

Watching my niece spill bubble solution when she bent over was interesting because it meant that she had to remember its presence when she wasn’t looking. But it also suggests that objects don’t have to be simulated but only need to exist while your attention is on them.

Similar objects are similar because the experiences are similar and I think you can get useful generalizations by extracting out similarities in appearance and similarities in response to actions.

But this scheme seems to fail utterly to handle cognitive decoupling

> Brian Cantwell Smith is always very good but “on the origin of objects” was very difficult for me to get anything actionable out of.

Yeah, know the feeling… I like the writing style, and the ‘middle distance’ thing definitely strikes me as important, but I haven’t managed to extract much else yet. One difficulty I’m having is that the argument is one big lump distributed across the whole book. There’s no summary where you can get the general shape of what he’s saying first, you just have to plough through the whole thing.

Anything else by BCS you’d recommend?

> “On the tigers in India” makes the point that people can talk about things that they will never experience, and let their words be grounded by other people in distant places and times.

Sounds like I should read this! This whole thing about grounding in other people’s experience is something I still don’t have much of a feeling for, think it’s one of the pieces I’m still missing in understanding David Chapman’s stuff. (I’m thinking of this comment, for example, particularly that last bit. “Or, to radicalize the claim, there is no original intentionality. All meaning is derived from interpretation, *potentially by someone else*.”)

I need to read some ethnomethodology. Keep saying this and not actually doing it.

I would recommend literally everything written by Brian Cantwell Smith. He tends not to repeat himself so you can just read straight through. I just really like the way he builds his arguments.

So it turns out there is no essay named “On the tigers in India”. However, the author is Hilary Putnam and the term he uses is “division of linguistic labor”. He doesn’t go into enough detail on exactly HOW other people make words mean something, but he gives the example that he knows nothing that would distinguish beech trees from elm trees but the names, but when he says something about elm trees it still is about elm trees because someone else knows what an elm tree is. (He’s all about sentences instead of utterances, which I think is a mistake, but oh well.)

If you want to know how people collectively give meanings to utterances the best example I have is what I noticed while playing with my niece as she was learning to speak. The primary thing she used language for was to fulfill her role in a ritualized collective action. For instance when she gave somebody something she’d say “Here you go” because that is what people say when they are doing that thing together (and then she’d pick it up and go “here you go” again because she enjoys doing things together). Many of her utterances weren’t meant to communicate information for you to think about but were distinctive markers of what collective action we were doing.

I like this view because it requires an agent to do much less processing to figure out what to do, and harmonizes well with “speech acts” and scripts as concepts.

Obviously sometimes people really are trying to bring your attention to something so you can think about it.

It is difficult for me to get a handle on because meanings are partially in your head and partially not, and how much of each it is is different for each thing.

I’m using linguistic meanings as a metaphor for the meaning of actions because I know more about linguistics than actionology.

I haven’t read enough ethnomethodology either and so if you find anything that is free online I’d love to read it.

OK, thanks, I’ve found Putnam’s *Meaning and Reference*, which has the elm example (but not the tiger one). I’ll give it a read!

Re ethnomethodology, I started on Suchman’s *Plans and Situated Actions*, which is pretty easy to find online, but stopped for some reason. I should get back to it. That’s all I’ve read so far.

This post and the previous one are marvelous. I agree that this idea of middle distance seems important and interesting. I might have to read “On the origin of objects” as well. Thank you for your accessible summary of those ideas!

Thank you! ‘There’s something interesting here’ is exactly what I wanted to get across. Don’t have a clear idea what that thing is yet!

Re: “Suppose that ordinary sunflowers move heliotropically, as the myth would have it”

Maybe I’m not decoupling enough, but I was distracted by this and had to know what the myth was. Apparently sunflowers track the sun before blooming, but after blooming, they face east. I guess the myth comes from not knowing the second part? Or did he mean something else?

Regarding disconnection and representation, I think there are connections to memory (knowing something happened after it stopped) and communication (inferring events at a distance). One can gather evidence of the past or of distant places through various clues that weren’t intended for that purpose, but this doesn’t imply a representation, just a causal connection. It seems like for this to become communication, there needs to be some sort of co-evolutionary effect where the transmitting process that leaves evidence is optimized to make information transmission more efficient. Maybe a representation can be considered to be optimized, evolved evidence?

I was also a bit confused by the ‘myth’ part, as I had always assumed that sunflowers did follow the sun. Thanks for actually bothering to look up what they really do (unlike me). I’d guess the same as you, that the ‘myth’ is not knowing the second part. Though that seems more of a minor misunderstanding than a myth.

> It seems like for this to become communication, there needs to be some sort of co-evolutionary effect where the transmitting process that leaves evidence is optimized to make information transmission more efficient. Maybe a representation can be considered to be optimized, evolved evidence?

I think I need an example or something to understand this?

Slensinsky

Yeah, that makes sense. Incidental clues to intention develop over time to become ostentatious symbols for communication.

Some literally co-evolved examples are the white tails on deer evolving to more clearly signal alarm (because deer were already looking at each other’s tails for signs of alarm).

And the spots on tiger ears and the white sclera of humans. And all kinds of communicative displays in animals.

Moving on to the metaphorically co-evolved things, a word is also quite an interesting thing because it depends on the facts that not only are you thinking of a thing, you are expecting other people to try to guess what you are thinking of, and furthermore that they are willing to believe that you are willing to help them guess what it is. (This isn’t an easy state of mind to end up in, which is why apes don’t learn to speak (they don’t look at what you point at).)

A sufficient condition for communication to develop is the belief that someone is trying to communicate with you. (Kinda like William James’s account of friendship)

I get into difficulty when I try to apply “representation” to how minds work because there don’t seem to be separate agents uttering and interpreting linguistic messages. And I want a story of how a mind interprets things without requiring each part of the mind to interpret things to the same degree as the whole does (progressively lesser degrees would be fine though).

Oh that reminds me. In the very beginning Brian Cantwell Smith talks about how numbers are not represented in a numerical computation. If I remember correctly he says that it is because numbers represent the computation instead, but I managed to miss his point.

If someone could re-explain what he meant there I’d feel a lot more solid in my understanding of the whole book.

Hm, your examples might help. Will have to think about it some more.

Can’t help you with that bit early on about numerical computations though… didn’t understand it at all! If I do go back and manage to make some sense of it I’ll let you know.

I’ve moved on to thinking that communication and representation are not any more related than data compression and representation are. The reason I think that is because phonemes are a superb example of something optimized for recognition that is not representational at all.

There are some interesting similarities in how both memory and language require us to think of something that isn’t present at that moment.

I also have a question about a part that I don’t understand, maybe you can be more helpful than I was? This is the ‘Stabilisation’ section of Chapter 7 (p 224 in my copy). He’s been discussing s-regions that track the o-region, like the super-sunflower, but then he says this isn’t enough:

> they *compensate* for the effective separation… but they do not yet *exploit* the separation – and thus do nothing to stabilize the object as an object (or anything else, for that matter)…

> The challenge for the s-region is to exploit the separation in order to *push the relational stability out onto the world*.

This continues for another paragraph, and looks important, but is really opaque to me. (This is also the point that the examples run dry.) After this he gets on to ‘cross-cutting extension’, and I am completely lost. Do you have any idea about what ‘exploiting the separation’ means? Or any good examples?

I think he’s talking about symbolic manipulation. When you exploit the fact that your representation of an object isn’t causally tied to that object you can do things like go to where it will be later.

For instance if you see fish in a stream, you can leave, go get bait, a fishing pole and net, tell your friend to get the pan ready to fry and then head back and catch the fish. This is exploiting the fact that you can do things related to the fish that would be difficult to do if you were only responding to the immediate stimulus.

and by “push the relational stability on to the world” he means you aren’t doing a stimulus response routine to the image of a fish you are responding to the fact that there is a fish there. The coherency of your whole action demands that fish are a real thing. The fish is an object that you carved out of the continuous stuff of the world, and now that it is an object you don’t have to treat it as a mere sensory impression anymore.

> by “push the relational stability on to the world” he means you aren’t doing a stimulus response routine to the image of a fish you are responding to the fact that there is a fish there

Obviously I’ll have to think about this some more but this bit is already very helpful, thanks!

Since you asked on twitter, I’ll try to reply here. The challenge is to write less than a book.

Anders’ fish example seems right to me.

By “cross-cutting,” BCS (confusingly) means “temporally extended,” afaict. For something to be an object, it has to keep being the *same* object across time.

“Being an object” is, traditionally, tied up with “identity conditions,” in other words. This is somewhat (but not altogether) mistaken inasmuch as there are never fully definite identity conditions. You can’t navigate the same river twice in the ship of Theseus, and so on.

This is (maybe not coincidentally) the same issue as the numerical computation one. That’s not specific to numbers; he’s using it as an example to say “if we can’t sort this out for numbers, which are the most definite and formal thing that exist, we can’t hope to do it for eggplants.”

So, in math (the territory, not the computational map) there’s the integer zero and the real zero. Same or different? This is a question mathematicians (and physicists) would never ask. Computer guys do because it’s a problem that actually has to be addressed (in the map).

Actually, what *is* the real zero? Maybe a Dedekind cut or Cantor sequence. Is Dedekind zero the same object as Cantor zero?

My one-time officemate David McAllester has a really interesting alternative foundation-of-mathematics framework in which this question can actually be posed, which you can’t in ZFC. He has a rigorous definition of “isoonticity” according to which Dedekind zero and Cantor zero are isoontic. His notion of “onticity” derives partly from Heidegger… but I digress.

In computers we have ints and floats. Is int 0 the same object as float 0.0? How about int16 0 vs int32 0? The answer is, it depends on how you look at it (and the specifics of the programming language). Many programming languages have multiple equality operators that make coarser and finer distinctions. In LISP, 0 and 0.0 are = but not EQ.

Now it’s tempting to say that the ULTIMATE REALITY is the underlying bit pattern, and the programming language’s equality operators are conveniences and NOT REAL. int16 0 is one thing and int32 0 is a different thing, and we can look inside a binary dump of the runtime state and see which is which.

The problem is that the same float 0.0 bit pattern may appear in memory in two places. Are those the same object or different?

In some LISP implementations, (EQ 0.0 0.0) is false. Floats are “boxed,” i.e. full-fledged objects, and the first 0.0 and the second one are created at different memory locations, so EQ (pointer equality) sees them as different objects.
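A loose Python analogue of the coarser and finer equality tests may help here. This is only a sketch by analogy: Python's `==` and `is` are not the same operators as Lisp's `=` and `EQ`, and the `Box` class is a hypothetical stand-in for a "boxed" float, used because a class instance is guaranteed to have its own identity.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """A hypothetical 'boxed' number: a full-fledged object wrapping a value."""
    value: float


int_zero = 0
float_zero = 0.0

# Coarse distinction: numerically the same.
same_number = int_zero == float_zero                # True
# Finer distinction: not even the same kind of thing.
same_type = type(int_zero) is type(float_zero)      # False

# Two boxes created separately, holding the same 0.0.
first = Box(0.0)
second = Box(0.0)
boxes_equal = first == second       # True: compared by contents
boxes_identical = first is second   # False: two distinct objects

print(same_number, same_type, boxes_equal, boxes_identical)
# True False True False
```

Which of these counts as "the same object" depends on which question you are asking, which is the point of the comment above.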

OK, so maybe the ULTIMATE REALITY is the contents of a memory address. That’s the finest distinction LISP can make.

But, the “same” memory address may be in several places at once, due to memory hierarchy. The programming language can’t see this, but the operating system’s memory management code has to keep track of the fact that a logical memory address is both in RAM and on the disk (if it’s paged in). The operating system can’t see this, but the cache control logic has to keep track of the fact that an address in RAM is also in the cache. Is the 0.0 in the cache the same object as the one in RAM at the same address? Yes for the cache logic, no for the OS. Is the 0.0 in RAM the same object as the one on the disk at the same address? Yes for the programming language, no for the OS.

The aim of 3-LISP was to enable a program to introspect on its own runtime. (This sort of thing has been a standard feature of programming languages for about 25 years now, but no one had done anything like it before BCS’ 1984 PhD thesis.) In order to do that, it had to represent the state of its runtime. In order to do that, it had to be able to talk about things like how datastructures work. So the idea of 2-LISP was to get a clear framework for doing that first, and then 3-LISP was built as an extension to 2-LISP.

The problem BCS points out on p.40 is keeping track of all these distinctions is nightmarishly complicated and almost always useless. As a programmer, you virtually always want one 0.0 to equal another 0.0. Usually you also want 0.0 to equal 0, although there are exceptions. You certainly don’t want to have to care about whether your 0.0 is paged in or not. (VERY old programmers remember having to explicitly manage this in our code. We also complain about having to get up from the terminal every half hour to shovel more coal into the boiler to keep the ALU’s steam pressure up.)

What you really want is to be able to make the distinctions when you need to, and not think about them when you don’t. Automatic type coercion tries to do this; in most languages nowadays you can write 1 + 1.0 and the “right” thing will happen. Famously, though, what’s “right” actually depends on what you are trying to do, in ways the language can’t capture. A common source of bugs is assuming the “right” coercion will occur when that’s not what the language does. Keeping track of coercion rules can be an annoying headache.
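As a small illustration of coercion being convenient until it isn't, here is a sketch in Python (chosen only as an example language; the variable names are made up). Mixing an integer with a float silently converts to float, which is usually what you want, but a large enough integer does not survive the trip.

```python
# The convenient case: int + float quietly becomes a float.
mixed = 1 + 1.0
print(mixed, type(mixed).__name__)  # 2.0 float

# The surprising case: 2**53 + 1 has no exact double-precision representation,
# so coercing it to float rounds it to a neighbouring value.
big = 2**53 + 1
coerced = big + 0.0
round_trip_ok = int(coerced) == big
print(round_trip_ok)  # False
```

Whether that rounding is "right" depends on whether the number was a measurement or, say, a database ID, and the language has no way of knowing which.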

[Insert standard Javascript jokes here.]

see, that’s what confuses me.

The map represents the territory right?

and the territory in this case is numbers,

and the map is the physical states of the computer.

so shouldn’t the computation represent the numbers?

Hmm, not sure what you are asking here. Yes, presumably, and I’m pretty sure BCS doesn’t say otherwise. The question is what this “represents” thing is. And the fact that we can’t pin down what numbers are, or what computation is, or even whether 0.0=0.0, makes this tricky. Ultimately, impossible, if we insist on an entirely definite definition.

You’re right, I re-read the chapter on computation and I think I must have garbled the quick description of 2-lisp into something completely different.

AHA! I found it.

It is on page 24-25 of the introduction to “Age of Significance”

“I argue that the view universally taken to follow from it—that computing should be analysed as structurally parallel to logic—is a simple but profound mistake. That is: the idea that, for purposes of developing a proper theory of computing, the marks on Turing machine tapes should be interpreted as representations or encodings of numbers, I claim to be false.

In its place, I defend the following thesis: that the true “encoding” relation defined over Turing machine tapes runs the other way around, from numbers to marks. Rather than its being the case, as is universally assumed, that configurations of marks encode numbers, that is, what is actually going on is that numbers and functions defined over them serve as models of configurations of marks, and of transformations among them.”

I was wondering if it came from there! I remember a beautifully phrased but baffling passage on the lines of ‘Dijkstra had it the wrong way round; the numbers are the telescope, and the computers are the stars’ (can’t be bothered to look up the original rn but it’s close to this).

I think I’m going to just take this numerical computation stuff as an example of wanting to pick out different things as ‘the object’ in different contexts, as David explains in his comment, and not bang my head too hard against understanding the finer points.

The sunflower example has some resonances with “The Semantics of Clocks”, a BCS paper from 1988 http://sci-hub.tw/10.1007/978-94-009-2699-8_1 . The idea (as I recall) is that clocks are a good model for representation because they participate in the same phenomenon they are about (time). The clocks go round in such a way as to track world-time despite being (sort of) causally disconnected, like the super-sunflowers.

Thanks, this looks promising! Only read a bit of it so far, but he’s talking about the same sort of idea:

> Everything has what I am calling a first factor; that’s what gives a system the ability to participate. The second factor of representation or content, which enables a system (a thinker, a clock) to stand in relation to what isn’t immediately accessible or discriminable, is a subsequent, more sophisticated capacity.

Hmm, typically the programming language specifies an abstraction and the compiler + runtime are free to implement it in any way that’s faithful to the abstraction. The abstraction is, essentially, the user interface, and the abstraction’s job isn’t to represent the details of the lower levels. It’s hiding them. Representing the underlying details is what a debugger does. There are multiple possible representations (such as source-level versus machine-level debuggers). You might also record other data about the implementation (such as cache usage or performance metrics) that the abstraction doesn’t cover at all.

At a lower level, the instruction set is an abstraction implemented by a CPU using a whole lot of transistors, which can vary significantly between machines. Generally, you don’t learn about how a CPU works internally by looking at the instruction set (though there may be clues).

Maybe it’s the opposite way? But if we thought of the machine as “representing” the user interface, that doesn’t seem right either. The relationship is not like how a map represents a territory, because the implementation is more complicated and harder to understand. It’s important for the machine to *implement* the abstraction, but this typically isn’t as an aid to understanding.

I suspect there is something similar going on with human communication, where the way people think may differ in ways we can’t easily describe or even have much self-knowledge of, but it’s mapped to English (for example) as a sort of portable representation. It might also apply to the relationship between what mathematicians think and how they write. Thoughts can vary as long as you can still communicate. And the published stuff is what counts to the community.

Mathematical theories seem to work a bit differently. In math you might describe how one construct maps to another, or some kind of equivalence, but it seems like this doesn’t imply an “implemented-by” relationship? So-called foundations are often put into place after higher levels have been in use for a while. Dedekind cuts were invented later than real numbers and are also taught later to students, so it seems they cannot be more fundamental, but just another relationship, perhaps nicer in some ways?

It might also be interesting to think about the relationship between maps and architectural drawings. A map is typically thought of as describing a pre-existing territory. The architectural drawing comes first, the building comes later, although maybe there is another drawing to represent the building “as built” if it wasn’t built according to plan. Still, the representation could be the same even if the causal dependency is opposite.

Reblogged this on Quaerere Propter Vērum and commented:

Excellent.
