I’m a fairly frequent Hacker News lurker, especially when I have some other important task that I’m avoiding. I normally head to the Active page (lots of comments, good for procrastination) and pick a nice long discussion thread to browse. So over time I’ve ended up with a good sense of what topics come up a lot. “The Bay Area is too expensive.” “There are too many JavaScript frameworks.” “Bootcamps: good or bad?” I have to admit that I enjoy these. There’s a comforting familiarity in reading the same internet argument over and over again.

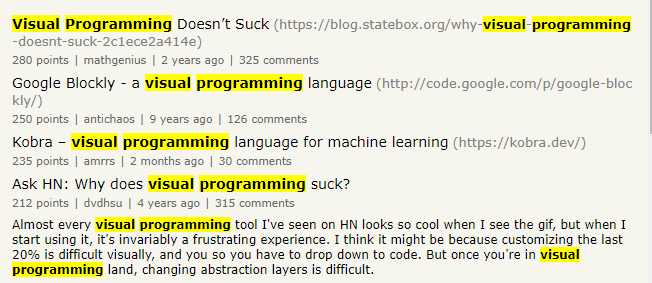

One of the more interesting recurring topics is visual programming:

Visual Programming Doesn’t Suck. Or maybe it does? These kinds of arguments usually start with a few shallow rounds of yay/boo. But then often something more interesting happens. Some of the subthreads get into more substantive points, and people with a deep knowledge of the tool in question turn up, and at this point the discussion can become genuinely useful and interesting.

This is one of the things I genuinely appreciate about Hacker News. Most fields have a problem with ‘ghost knowledge’, hard-won practical understanding that is mostly passed on verbally between practitioners and not written down anywhere public. At least in programming some chunk of it makes it into forum posts. It’s normally hidden in the depths of big threads, but that’s better than nothing.

I decided to read a bunch of these visual programming threads and extract some of this folk wisdom into a more accessible form. The background for how I got myself into this is a bit convoluted. In the last year or so I’ve got interested in the development of writing as a technology. There are two books in particular that have inspired me:

- Walter Ong’s Orality and Literacy: the Technologizing of the Word. This is about the history of writing and how it differs from speech; I wrote a sort of review here. Everything that we now consider obvious, like vowels, full stops and spaces between words, had to be invented at some point, and this book gives a high level overview of how this happened and why.

- Catarina Dutilh Novaes’s Formal Languages in Logic. The title makes it sound like a maths textbook, but Novaes is a philosopher and really it’s much closer to Ong’s book in spirit, looking at formal languages as a type of writing and exploring how they differ from ordinary written language.

Dutilh Novaes focuses on formal logic, but I’m curious about formal and technical languages more generally: how do we use the properties of text in other fields of mathematics, or in programming? What is text good at, and what is it bad at? Comment threads on visual programming turn out to be a surprisingly good place to explore this question. If something’s easy in text but difficult in a specific visual programming tool, you can guarantee that someone will turn up to complain about it. Some of these complaints are fairly superficial, but some get into some fairly deep properties of text: linearity, information density, an alphabet of discrete symbols. And conversely, enthusiasm for a particular visual feature can be a good indicator of what text is poor at.

So that’s how I found myself plugging through a text file with 1304 comments pasted into it and wondering what the hell I had got myself into.

What I did

Note: This post is looong (around 9000 words), but also very modular. I’ve broken it into lots of subsections that can be read relatively independently, so it should be fairly easy to skip around without reading the whole thing. Also, a lot of the length is from liberal use of quotes from comment threads. So hopefully it’s not quite as bad as it looks!

This is not supposed to be some careful scientific survey. I decided what to include and how to categorise the results based on whatever rough qualitative criteria seemed reasonable to me. The basic method, such as it was, was the following:

- Type ‘visual programming’ into the HN search box and pull out the six entries on the first page that were a) about visual programming in general, not a specific tool and b) had long discussion threads (100+ comments). These six threads were:

  - Ask HN: Why does visual programming suck?

  - Visual Programming Doesn’t Suck, a discussion of this blog post

  - Ask HN: Why isn’t visual programming a bigger thing?

  - Visual Programming – Why It’s a Bad Idea, a discussion of this blog post

  - Visual Programming Is Unbelievable (2015), a discussion of this blog post

  - Maybe visual programming is the answer, maybe not, a discussion of this blog post

- Skim through the comments and do a rough triage, keeping anything that was on-topic and fairly substantive

- Pull out interesting-looking parts of these comments into a spreadsheet, and tag with common themes that I noticed

- Write this blog post

The basic structure of the rest of the post is the following:

- A breakdown of what commenters normally meant by ‘visual programming’ in these threads. It’s a pretty broad term, and people come in with very different understandings of it.

- Common themes. This is the main bulk of the post, where I’ve pulled out topics that came up in multiple threads.

- A short discussion-type section with some initial questions that came to mind while writing this. There are many directions I could take this in, and this post is long enough without discussing these in detail, so I’ll just wave at some of them vaguely. Probably I’ll eventually write at least one follow-up post to pick up some of these strands when I’ve thought about them more.

Types of visual programming

There are also a lot of disparate visual programming paradigms that are all classed under “visual”, I guess in the same way that both Haskell and Java are “textual”. It makes for a weird debate when one party in a conversation is thinking about patch/wire dataflow languages as the primary VPLs (e.g. QuartzComposer) and the other one is thinking about procedural block languages (e.g. Scratch) as the primary VPLs.

One difficulty with interpreting these comments is that people often start arguing about ‘visual programming’ without first specifying what type of visual programming they mean. Sometimes this gets cleared up further into a comment thread, when people start naming specific tools, and sometimes it never gets cleared up at all. There were a few broad categories that came up frequently, so I’ll start by summarising them below.

Node-based interfaces

There are a large number of visual programming tools that are roughly in the paradigm of ‘boxes with some arrows between them’, like the LabVIEW example above. I think the technical term for these is ‘node-based’, so that’s what I’ll call them. These ended up being the main topic of conversation in four of the six discussions, and mostly seemed to be the implied topic when someone was talking about ‘visual programming’ in general. Most of these tools are special-purpose ones that are mainly used in a specific domain. These domains came up repeatedly:

- Laboratory and industrial control. LabVIEW was the main tool discussed in this category. In fact it was probably the most commonly discussed tool of all, attracting its fair share of rants but also many defenders.

- Game engines. Unreal Engine’s Blueprints was probably the second most common topic. This is a visual gameplay scripting system.

- Music production. Max/MSP came up a lot as a tool for connecting and modifying audio clips.

- Visual effects. Houdini, Nuke and Blender all have node-based editors for creating effects.

- Data migration. SSIS was the main tool here, used for migrating and transforming Microsoft SQL Server data.

Other tools that got a few mentions include Simulink (Matlab-based environment for modelling dynamical systems), Grasshopper for Rhino3D (3D modelling), TouchDesigner (interactive art installations) and Azure Logic Apps (combining cloud services).

The only one of these I’ve used personally is SSIS, and I only have a basic level of knowledge of it.

Block-based IDEs

This category includes environments like Scratch that convert some of the syntax of normal programming into coloured blocks that can be slotted together. These are often used as educational tools for new programmers, especially when teaching children.

This was probably the second most common thing people meant by ‘visual programming’, though there was some argument about whether they should count, as they mainly reproduce the conventions of normal text-based programming:

Scratch is a snap-together UI for traditional code. Just because the programming text is embedded inside draggable blocks doesn’t make it a visual language, its a different UI for a text editor. Sure, its visual, but it doesn’t actually change the language at all in any way. It could be just as easily represented as text, the semantics are the same. Its a more beginner-friendly mouse-centric IDE basically.

— dkersten

Drag-n-drop UI builders

Drag-n-drop UI builders came up a bit, though not as much as I originally expected, and generally not naming any specific tool (Delphi did get a couple of mentions.) In particular there was very little discussion of the new crop of no-code/low-code tools, I think because most of these threads predate the current hype wave.

These tools are definitely visual, but not necessarily very programmatic — they are often intended for making one specific layout rather than a dynamic range of layouts. And the visual side of UI design tends to run into conflict with the ability to specify dynamic behaviour:

The main challenge in this particular domain is describing what is supposed to happen to the layout when the size of the window changes, or if there are dependencies among visual elements (e.g. some element only appears when a check box is checked). When laying things out visually you can only ever design one particular instance of a layout. If all your elements are static, this works just fine. But if the layout is in any way dynamic (with window resizing being the most common case) you now have to either describe what you want to have happen when things change, or have the system guess. And there are a lot of options: scaling, cropping, letterboxing, overflowing, “smart” reflow… The possibilities are endless, so describing all of that complexity in general requires a full programming language. This is one the reasons that even CSS can be very frustrating, and people often resort to Javascript to get their UI to do the Right Thing.

— lisper

These tools also have less of the discretised, structured element that is usually associated with programming — for example, node-based tools still have a discrete ‘grammar’ of allowable box and arrow states that can be composed together. UI tools are relatively continuous and unstructured, where UI elements can be resized to arbitrary pixel sizes.

Spreadsheets

There’s a good argument for spreadsheets being a visual programming paradigm, and a very successful one:

I think spreadsheets also qualify as visual programming languages, because they’re two-dimensional and grid based in a way that one-dimensional textual programming languages aren’t.

The grid enables them to use relative and absolute 2D addressing, so you can copy and paste formulae between cells, so they’re reusable and relocatable. And you can enter addresses and operands by pointing and clicking and dragging, instead of (or as well as) typing text.

Spreadsheets are definitely not the canonical example anyone has in mind when talking about ‘visual programming’, though, and discussion of spreadsheets was confined to a few subthreads.
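To make the relative versus absolute addressing point a bit more concrete, here is a rough Python sketch of what happens to a formula when it is copied to another cell. It is not taken from the threads or from any real spreadsheet’s implementation: the `shift_formula` helper and its regular expression are hypothetical, and it assumes simple A1-style references where a `$` pins the column or row it precedes.

```python
import re

# Hypothetical A1-style reference: optional '$', column letters, optional '$', row digits.
REF = re.compile(r"(\$?)([A-Z]+)(\$?)(\d+)")

def col_to_num(col):
    """Convert column letters to a 1-based index (A -> 1, Z -> 26, AA -> 27)."""
    n = 0
    for ch in col:
        n = n * 26 + (ord(ch) - ord("A") + 1)
    return n

def num_to_col(n):
    """Convert a 1-based column index back to letters."""
    col = ""
    while n > 0:
        n, rem = divmod(n - 1, 26)
        col = chr(ord("A") + rem) + col
    return col

def shift_formula(formula, d_rows, d_cols):
    """Rewrite references as if the formula were pasted d_rows down and d_cols right."""
    def shift(match):
        col_abs, col, row_abs, row = match.groups()
        if not col_abs:  # relative column: moves with the paste
            col = num_to_col(col_to_num(col) + d_cols)
        if not row_abs:  # relative row: moves with the paste
            row = str(int(row) + d_rows)
        return f"{col_abs}{col}{row_abs}{row}"
    return REF.sub(shift, formula)

# Copy a formula one row down: the relative reference follows, the pinned one stays.
print(shift_formula("=A1*$B$1", 1, 0))
```

The sketch ignores plenty that a real spreadsheet has to handle (ranges that run off the sheet, function names that happen to look like references, and so on), but it shows why a formula is ‘relocatable’: the grid position is part of its meaning.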

Visual enhancements of text-based code

As a believer myself, I think the problem is that visual programming suffers the same problem known as the curse of Artificial Intelligence:

“As soon as a problem in AI is solved, it is no longer considered AI because we know how it works.” [1]

Similarly, as soon as a successful visual interactive feature (be it syntax highlighting, trace inspectors for step-by-step debugging, “intellisense” code completion…) gets adopted by IDEs and become mainstream, it is no longer considered “visual” but an integral and inevitable part of classic “textual programming”.

There were several discussions of visual tooling for understanding normal text-based programs better, through debugging traces, dependency graphs, inheritance hierarchies, etc. Again, these were mostly confined to a few subthreads rather than being a central example of ‘visual programming’.

Several people also pointed out that even text-based programming in a plain text file has a number of visual elements. Code as written by humans is not just a linear string of bytes. We also make use of indentation, whitespace and visually distinctive characters:

Code is always written with “indentation” and other things that demonstrate that the 2d canvas distribution of the glyphs you’re expressing actually does matter for the human element. You’re almost writing ASCII art. The ( ) and [ ] are even in there to evoke other visual types.

— nikki93

Brackets are a nice example — they curve towards the text they are enclosing, reinforcing the semantic meaning in a visual way.

Experimental or speculative interfaces

At the other end of the scale from brackets and indentation, we have completely new and experimental visual interfaces. Bret Victor’s Dynamicland and other experiments were often brought up here, along with speculations on the possibilities opened up by VR:

As long as we’re speculating: I kind of dream that maybe we’ll see programming environments that take advantage of VR.

Humans are really good at remembering spaces. (“Describe for me your childhood bedroom.” or “What did your third grade teacher look like?”)

There’s already the idea of “memory palaces” [1] suggesting you can take advantage of spatial memory for other purposes.

I wonder, what would it be like to learn or search a codebase by walking through it and looking around?

[1] https://en.wikipedia.org/wiki/Method_of_loci

— danblick

This is the most exciting category, but it’s so wide open and untested that it’s hard to say anything very specific. So, again, this was mainly discussed in tangential subthreads.

Common themes

There were many talking points that recurred again and again over the six threads. I’ve tried to collect them here.

I’ve ordered them in rough order of depth, starting with complaints about visual programming that could probably be addressed with better tooling and then moving towards more fundamental issues that engage with the specific properties of text as a medium (there’s plenty of overlap between these categories, it’s only a rough grouping). Then there’s a grab bag of interesting remarks that didn’t really fit into any category at the end.

Missing tooling

A large number of complaints in all threads were about poor tooling. As a default format, text has an enormous ecosystem of existing tools for input, search, diffing, formatting, etc etc. Most of these could presumably be replicated for any given visual format, but there are many kinds of visual formats and generally these are missing at least some of the conveniences programmers expect. I’ve discussed some of the most common ones below.

Managing complexity

This topic came up over and over again, normally in relation to node-based tools, and often linking to either this Daily WTF screenshot of LabVIEW nightmare spaghetti or the Blueprints from Hell website. Boxes and arrows can get really messy once there are a lot of boxes and a lot of arrows.

Unreal has a VPL and it is a pain to use. A simple piece of code takes up so much desktop real estate that you either have to slowly move around to see it all or have to add more monitors to your setup to see it all. You think spaghetti code is bad imagine actually having a visual representation of it you have to work with. Organization doesn’t exist you can go left, up, right, or down.

— smilesnd

The standard counterargument to this was that LabVIEW and most other node-based environments do come with tools for encapsulation: you can generally ‘box up’ sets of nodes into named function-like subdiagrams. The extreme types of spaghetti code are mostly produced by inexperienced users with a poor understanding of the modularisation options available to them, in the same way that a beginner Python programmer with no previous coding experience might write one giant script with no functions:

Somehow people form the opinion that once you start programming in a visual language that you’re suddenly forced, by some unknown force, to start throwing everything into a single diagram without realizing that they separate their text-based programs into 10s, 100s, and even 1000s of files.

Poorly modularized and architected code is just that, no matter the paradigm. And yes, there are a lot of bad LabVIEW programs out there written by people new to the language or undisciplined in their craft, but the same holds true for stuff like Python or anything else that has a low barrier to entry.

— bmitc

Viewed through this lens there’s almost an argument that visual spaghetti is a feature not a bug — at least you can directly see that you’ve created a horrible mess, without having to be much of a programming expert.

There were a few more sophisticated arguments against node-based editors that acknowledged the fact that encapsulation existed but still found the mechanics of clicking through layers of subdiagrams to be annoying or confusing.

It may be that I’m just not a visual person, but I’m currently working on a project that has a large visual component in Pentaho Data Integrator (a visual ETL tool). The top level is a pretty simple picture of six boxes in a pipeline, but as you drill down into the components the complexity just explodes, and it’s really easy to get lost. If you have a good 3-D spatial awareness it might be better, but I’ve started printing screenshots and laying them out on the floor. I’m really not a visual person though…

IDEs for text-based languages normally have features like code folding and call hierarchies for moving between levels, but these conventions are less developed in node-based tools. This may be just because these tools are more niche and have had less development time, or it may genuinely be a more difficult problem for a 2D layout — I don’t know enough about the details to tell.

Input

In general, all the dragging quickly becomes annoying. As a trained programmer, you can type faster than you can move your mouse around. You have an algorithm clear in your head, but by the time you’ve assembled it half-way on the screen, you already want to give up and go do something else.

— TeMPOraL

Text-based languages also have a highly-refined interface for writing the language: most of us have a great big rectangle sitting on our desks with a whole grid of individual keys mapping to specific characters. In comparison, a visual tool based on a different paradigm won’t have a special input device, so it will either have to rely on the mouse (lots of tedious RSI-inducing clicking around) or involve learning a new set of special-purpose keyboard shortcuts. These shortcuts can work well for experienced programmers:

If you are a very experienced programmer, you program LabVIEW (one of the major visual languages) almost exclusively with the keyboard (QuickDrop).

Let me show you an example (gif) I press “Ctrl + space” to open QuickDrop, type “irf” (a short cut I defined myself) and Enter, and this automatically drops a code snippet that creates a data structure for an image, and reads an image file.

But it’s definitely a barrier to entry.

Formatting

If you have any desire for aesthetics, you’ll be spending lots of time moving wires around.

— prewett

Another tedious feature of many node-based tools is arranging all the boxes and arrows neatly on the screen. It’s irrelevant for the program output, but makes a big difference to readability. (Also it’s just downright annoying if the lines look wrong — my main memory of SSIS is endless tweaking to get the arrows lined up nicely).

Text-based languages are more forgiving, and also people tend to solve the problem with autoformatters. I don’t have a good understanding of why these aren’t common in node-based editors. (Maybe they actually are and people were complaining about the tools that are missing them? Or maybe the sort of formatting that is useful is just not automatable, e.g. grouping boxes by semantic meaning). It’s definitely a harder problem than formatting text, but there was some argument about exactly how hard it is to get at least a reasonable solution:

Automatic layout is hard? Yes, an optimal solution to graph layout is NP-complete, but so is register allocation, and my compiler still works (and that isn’t even its bottleneck). There’s plenty of cheap approximations that are 99% as good.

— ken
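As an illustration of what a ‘cheap approximation’ might look like, here is a minimal Python sketch of longest-path layering, a common first step in layered graph drawing: each node goes in the column after its deepest predecessor. This is not what any of the tools discussed actually do, as far as I know. The `layer_nodes` and `layout` names are made up for the example, and it assumes the diagram is a directed acyclic graph given as a list of `(source, target)` edges.

```python
from collections import defaultdict

def layer_nodes(edges):
    """Assign each node of a DAG a column: one past its deepest predecessor."""
    preds = defaultdict(set)
    nodes = set()
    for src, dst in edges:
        preds[dst].add(src)
        nodes.update((src, dst))

    layer = {}

    def depth(node):
        # Nodes with no incoming edges land in column 0.
        if node not in layer:
            layer[node] = 1 + max((depth(p) for p in preds[node]), default=-1)
        return layer[node]

    for node in nodes:
        depth(node)
    return layer

def layout(edges):
    """Return {node: (column, row)}, stacking each column's nodes in name order."""
    layer = layer_nodes(edges)
    columns = defaultdict(list)
    for node in sorted(layer):
        columns[layer[node]].append(node)
    return {node: (x, y) for x, col in columns.items() for y, node in enumerate(col)}

# A small hypothetical dataflow diagram: one source fans out and merges again.
edges = [("read", "filter"), ("read", "scale"), ("filter", "merge"), ("scale", "merge")]
print(layout(edges))
```

This guarantees that every arrow points left to right, which is already a lot of the tidying people do by hand. It makes no attempt at the genuinely hard parts, such as minimising wire crossings or grouping boxes by meaning.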

Version control and code review

Same story again — text comes with a large ecosystem of existing tools for diffing, version control and code review. It sounds like at least the more developed environments like LabVIEW have some kind of diff tool, and an experienced team can build custom tools on top of that:

We used Perforce. So a custom tool was integrated into Perforce’s visual tool such that you could right-click a changelist and submit it for code review. The changelist would be shelved, and then LabVIEW’s diff tool (lvcompare.exe) would be used to create screenshots of all the changes (actually, some custom tools may have done this in tandem with or as a replacement of the diff tool). These screenshots, with a before and after comparison, were uploaded to a code review web server (I forgot the tool used), where comments could be made on the code. You could even annotate the screenshots with little rectangles that highlighted what a comment was referring to. Once the comments were resolved, the code would be submitted and the changelist number logged with the review. This is based off of memory, so some details may be wrong.

This is important because it shows that such things can exist. So the common complaint is more about people forgetting that text-based code review tools originally didn’t exist and were built. It’s just that the visual ones need to be built and/or improved.

— bmitc

But you don’t just get nice stuff out of the box.

Debugging

Opinions were split on debugging. Visual, flow-based languages can make it easy to see exactly which route through the code is activated:

Debugging in unreal is also really cool. The “code paths” light up when activated, so it’s really easy to see exactly which branches of code are and aren’t being run – and that’s without actually using a debugger. Side note – it would be awesome if the lines of text in my IDE lit up as they were run. Also, debugging games is just incredibly fun and sometimes leads to new mechanics.

I remember this being about the only enjoyable feature of my brief time working with SSIS — boxes lit up green if everything went to plan, and red if they hit an exception. It was satisfying getting a nice run of green boxes once a bug was fixed.

On the other hand, there were problems with complexity again. Here are some complaints about LabVIEW debugging:

3) debugging is a pain. LabVIEW’s trace is lovely if you have a simple mathematical function or something, but the animation is slow and it’s not easy to check why the value at iteration 1582 is incorrect. Nor can you print anything out, so you end up putting an debugging array output on the front panel and scrolling through it.

4) debugging more than about three levels deep is painful: it’s slow and you’re constantly moving between windows as you step through, and there’s no good way to figure out why the 20th value in the leaf node’s array is wrong on the 15th iteration, and you still can’t print anything, but you can’t use an output array, either, because it’s a sub-VI and it’s going to take forever to step through 15 calls through the hierarchy.

— prewett

Use cases

There was a lot of discussion on what sort of problem domains are suited to ‘visual programming’ (which often turned out to mean node-based programming specifically, but not always).

Better for data flow than control flow

A common assertion was that node-based programming is best suited to data flow situations, where a big pile of data is tipped into some kind of pipeline that transforms it into a different form. Migration between databases would be a good example of this. On the other hand, domains with lots of branching control flow were often held to be difficult to work with. Here’s a representative quote:

Control flow is hard to describe visually. Think about how often we write conditions and loops.

That said – working with data is an area that lends itself well to visual programming. Data pipelines don’t have branching control flow and So you’ll see some really successful companies in this space.

I’m not sure how true this is? There wasn’t much discussion of why this would be the case, and it seems that LabVIEW for example has decent functionality for loops and conditions:

Aren’t conditionals and loops easier in visual languages? If you need something to iterate, you just draw a for loop around it. If you need two while loops each doing something concurrently, you just draw two parallel while loops. If you need to conditionally do something, just draw a conditional structure and put code in each condition.

One type of control structure I have not seen a good implementation of is pattern matching. But that doesn’t mean it can’t exist, and it’s also something most text-based languages don’t do anyway.

— bmitc

Looking at some examples, these don’t look too bad.

Maybe the issue is that there is a conceptual tension between data flow and control flow situations themselves, rather than just the representation of them? Data flow pipelines often involve multiple pieces of data going through the pipeline at once and getting processed concurrently, rather than sequentially. At least one comment addressed this directly:

One of the unappreciated facets of visual languages is precisely the dichotomy between easy dataflow vs easy control flow. Everyone can agree that

–> [A] –> [B] –>

——>

represents (1) a simple pipeline (function composition) and (2) a sort of local no-op, but what about more complex representations? Does parallel composition of arrows and boxes represent multiple data inputs/outputs/computations occurring concurrently, or entry/exit points and alternative choices in a sequential process? Is there a natural “split” of flowlines to represent duplication of data, or instead a natural “merge” for converging control flows after a choice? Do looping diagrams represent variable unification and inference of a fixpoint, or the simpler case of a computation recursing on itself, with control jumping back to an earlier point in the program with updated data?

— zozbot34
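The two uncontroversial readings in that comment are easy to write down in text. A rough Python sketch, with a hypothetical `pipeline` helper standing in for the arrows and two made-up stages standing in for the boxes `A` and `B`:

```python
from functools import reduce

def pipeline(*stages):
    """Compose stages left to right; with no stages it passes its input straight through."""
    return lambda x: reduce(lambda acc, stage: stage(acc), stages, x)

def a(x):
    # Stand-in for box [A]
    return x + 1

def b(x):
    # Stand-in for box [B]
    return x * 10

a_then_b = pipeline(a, b)   # --> [A] --> [B] -->
bare_arrow = pipeline()     # a bare arrow: the local no-op

print(a_then_b(3), bare_arrow(3))
```

The harder cases in the comment (parallel wires, splits, merges, loops) are exactly the ones where this sketch would have to pick a meaning. A text language picks one and gives it a keyword, whereas a diagram leaves the reader to guess which convention is in force.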

Overall I’d have to learn a fair bit more to understand what the problem is.

Accessible to non-programmers

Less controversially, visual tools are definitely useful for people with little programming experience, as a way to get started without navigating piles of intimidating syntax.

So the value ends up being in giving more people who are unskilled or less skilled in programming a way to express “programmatic thinking” and algorithms.

I have taught dozens of kids scratch and that’s a great application that makes programming accessible to “more” kids.

— sfifs

Inherently visual tasks

Visual programming is, unsurprisingly, well-suited to tasks that have a strong visual component. We see this on the small scale with things like colour pickers, which are far more helpful for choosing a colour than typing in an RGB code and hoping for the best. So even primarily text-based tools might throw in some visual features for tasks that are just easier that way.

Some domains, like visual effects, are so reliant on being able to see what you’re doing that visual tools are a no-brainer. See the TouchDesigner tutorial mentioned in this comment for an impressive example. If you need to do a lot of visual manipulation, giving up the advantages of text is a reasonable trade:

Why is plain text so important? Well for starters it powers version control and cut and pasting to share code, which are the basis of collaboration, and collaboration is how we’re able to construct such complex systems. So why then don’t any of the other apps use plain text if it’s so useful? Well 100% of those apps have already given up the advantages of plain text for tangential reasons, e.g., turning knobs on a synth, building a model, or editing a photo are all terrible tasks for plain text.

Niche domains

A related point was that visual tools are generally designed for niche domains, and rarely get co-opted for more general programming. A common claim was that visual tools favour concrete situations over abstract ones:

There is a huge difference between direct manipulation of concrete concepts, and graphical manipulation of abstract code. Visual programming works much better with the former than the latter.

It does seem to be the case that visual tools generally ‘stay close to the phenomena’. There’s a tension between showing a concrete example of a particular situation, and being able to go up to a higher level of abstraction and dynamically generate many different examples. (A similar point came up in the section on drag-n-drop editors above.)

Deeper structural properties of text

“Text is the most socially useful communication technology. It works well in 1:1, 1:N, and M:N modes. It can be indexed and searched efficiently, even by hand. It can be translated. It can be produced and consumed at variable speeds. It is asynchronous. It can be compared, diffed, clustered, corrected, summarized and filtered algorithmically. It permits multiparty editing. It permits branching conversations, lurking, annotation, quoting, reviewing, summarizing, structured responses, exegesis, even fan fic. The breadth, scale and depth of ways people use text is unmatched by anything. There is no equivalent in any other communication technology for the social, communicative, cognitive and reflective complexity of a library full of books or an internet full of postings. Nothing else comes close.”

— Graydon Hoare, always bet on text, quoted by devcriollo

In this section I’ll look at properties that apply more specifically to text. Not everything in the quote above came up in discussion (and much of it is applicable to ordinary language more than to programming languages), but it does give an idea of the special position held by text.

Communicative ability

I think the reason is that text is already a highly optimized visual way to represent information. It started with cave paintings and evolved to what it is now.

“Please go to the supermarket and get two bottles of beer. If you see Joe, tell him we are having a party in my house at 6 tomorrow.”

It took me a few seconds to write that. Imagine I had to paint it.

— Changu

The communicative range of text came up a few times. I’m not convinced on this one. It’s true that ordinary language has this ability to finely articulate incredibly specific meanings, in a way that pictures can’t match. But the real reference class we want to compare to is text-based programming, not ordinary language. Programming languages have a much more restrictive set of keywords that communicate a much smaller set of ideas, mostly to do with quantity, logical implication and control flow.

In the supermarket example above, the if-then structure could be expressed in these keywords, but all the rest of the work would be being done by tokens like “bottlesOfBeer”, which are meaningless to the computer and only help the human reading it.
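Here is roughly what that looks like, as a minimal Python sketch of the supermarket errand. Everything in it is invented for illustration: the `errand` function, the `saw_joe` flag and the snake-case `bottles_of_beer` (Python’s spelling of “bottlesOfBeer”).

```python
def errand(saw_joe):
    """Return the list of things to do on the supermarket trip."""
    bottles_of_beer = 2
    actions = [f"buy {bottles_of_beer} bottles of beer"]
    if saw_joe:  # the only piece of control flow in the whole errand
        actions.append("tell Joe: party at my house, 6 tomorrow")
    return actions

print(errand(saw_joe=True))
```

Rename `bottles_of_beer` to `x` and `saw_joe` to `y` and the computer behaves identically. The `if` carries the logic, and the names and strings carry the meaning for the human reader.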

As soon as we’ve assigned something a variable name, we’ve already altered our code into a form to assist our cognition.

— sinker

It seems much more reasonable that this limited structure of keywords can be ported to a visual language, and in fact a node-based tool like LabVIEW seems to have most of them. Visual languages generally still have the ability to label individual items with text, so you can still have a “bottlesOfBeer” label if you want and get the communicative benefit of language. (It is true that a completely text-free language would be a pain to deal with, but nobody seems to be doing that anyway.)

Information density

A more convincing related point is that text takes up very little space. We’re already accustomed to distinguishing letters, even if they’re printed in a smallish font, and they can be packed together closely. It is true that the text-based version of the supermarket program would probably take up less space than a visual version.

This complaint came up a lot in relation to mathematical tasks, which are often built up by composing a large number of simpler operations. This can become a massive pain if the individual operations take up a lot of space:

Graphs take up much more space on the screen than text. Grab a pen and draw a computational graph of a Fourier transformation! It takes up a whole screen. As a formula, it takes up a tiny fraction of it. Our state machine used to take up about 2m x 2m on the wall behind us.

— Regic

Many node-based tools seem to have some kind of special node for typing in maths in a more conventional linear way, to get around this problem.
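To get a feel for the difference in density, here is a small Python sketch of my own (not taken from any of the tools discussed). It writes a quadratic once as a one-line formula and once as an explicit dataflow graph with one entry per box. The graph encoding, the node names and the `evaluate` helper are all assumptions made up for this illustration.

```python
# One line of text: the quadratic a*x^2 + b*x + c.
def quadratic_text(a, b, c, x):
    return a * x * x + b * x + c


# The same computation as an explicit dataflow graph: one entry per box,
# each naming its operation and the two boxes (or inputs) wired into it.
GRAPH = {
    "xx": ("mul", "x", "x"),
    "axx": ("mul", "a", "xx"),
    "bx": ("mul", "b", "x"),
    "sum1": ("add", "axx", "bx"),
    "out": ("add", "sum1", "c"),
}

OPS = {"mul": lambda p, q: p * q, "add": lambda p, q: p + q}


def evaluate(graph, node, inputs):
    """Recursively evaluate `node`, looking up leaf values in `inputs`."""
    if node in inputs:
        return inputs[node]
    op, left, right = graph[node]
    return OPS[op](evaluate(graph, left, inputs), evaluate(graph, right, inputs))
```

Even for this tiny formula the graph needs five boxes and ten wires, each of which would have to be placed and routed on a canvas. A text-entry node sidesteps that by collapsing the whole graph back into the one-line version.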

(Sidenote: this didn’t come up in any of the discussions, but I am curious as to how fundamental this limitation is. Part of it comes from the sheer familiarity of text. The first letters we learned as children were printed a lot bigger! So presumably we could learn to distinguish closely packed shapes if we were familiar enough with the conventions. At this point, of course, with a small number of distinctive glyphs, it would share a lot of properties with text-based language. See the section on discrete symbols below.)

Linearity

Humans are centered around linear communication. Spoken language is essentially linear, with good use of a stack of concepts. This story-telling mode maps better on a linear, textual representation than on a graphical representation. When provided with a graph, it is difficult to find the start and end. Humans think in graphs, but communicate linearly.

— edejong

The linearity of text is a feature that is mostly preserved in programming. We don’t literally read one giant 1D line of symbols, of course. It’s broken into lines and there are special structures for loops. But the general movement is vertically downwards. “1.5 dimensions” is a nice description:

When you write text-based code, you are also restricted to 2 dimensions, but it’s really more like 1.5 because there is a heavy directionality bias that’s like a waterfall, down and across. I cannot copy pictures or diagrams into a text document. I cannot draw arrows between comments to the relevant code; I have to embed the comment within the code because of this dimensionality/directionality constraint. I cannot “touch” a variable (wire) while the program is running to inspect its value.

— bmitc

It’s true that many visual environments give up this linearity and allow more general positioning in 2D space (arbitrary placing of boxes and arrows in node-based programming, for example, or the 2D grids in spreadsheets). This has benefits and costs.

On the costs side, linear structures are a good match to the sequential execution of program instructions. They’re also easy to navigate and search through, top to bottom, without getting lost in branching confusion. Developing tools like autoformatters is more straightforward (we saw this come up in the earlier section on missing tooling).

On the benefits side, 2D structures give you more of an expressive canvas for communicating the meaning of your program: grouping similar items together, for example, or using shapes to distinguish between types of object.

In LabVIEW, not only do I have a 2D surface for drawing my program, I also get another 2D surface to create user interfaces for any function if I need. In text-languages, you only have colors and syntax to distinguish datatypes. In LabVIEW, you also have shape. These are all additional dimensions of information.

— bmitc

They can also help in remembering where things are:

One of the interesting things I found was that the 2-dimensional layout helped a lot in remembering where stuff was: this was especially useful in larger programs.

— dpwm

And the match to sequential execution is less important if your target domain is also non-sequential in some way:

If the program is completely non-sequential, visual tools which reflects the structure of the program are going to be much better than text. For example, if you are designing a electronic circuit, you draw a circuit diagram. Describing a electronic circuit purely in text is not going to be very helpful.

— nacc

Small discrete set of symbols

Written text IS a visual medium. It works because there is a finite alphabet of characters that can be combined into millions of words. Any other “visual” language needs a similar structure of primitives to be unambiguously interpreted.

— c2the3rd

This is a particularly important point that was brought up by several commenters in different threads. Text is built up from a small number of distinguishable characters. Text-based programming languages add even more structure, restricting to a constrained set of keywords that can only be combined in predefined ways. This removes ambiguity in what the program is supposed to do. The computer is much stupider than a human and ultimately needs everything to be completely specified as a sequence of discrete primitive actions.

At the opposite end of the spectrum is, say, an oil painting, which is also a visual medium but much more of an unconstrained, freeform one, where brushstrokes can swirl in any arbitrary pattern. This freedom is useful in artistic fields, where rich ambiguous associative meaning is the whole point, but becomes a nuisance in technical contexts. So different parts of the spectrum are used for different things:

Because each method has its pros and cons. It’s a difference of generality and specificity.

Consider this list as a ranking: 0 and 1 >> alphabet >> Chinese >> picture.

All 4 methods can be useful in some cases. Chinese has tens of thousands of characters, some people consider the language close to pictures, but real pictures have more than that (infinite variants).

Chinese is harder to parse than alphabet, and picture is harder than Chinese. (Imagine a compiler than can understand arbitrary picture!)

— c_shu

Visual programs are still generally closer to the text-based program end of the spectrum than the oil painting one. In a node-based programming language, for example, there might be a finite set of types of boxes, and defined rules on how to connect them up. There may be somewhat more freedom than normal text, with the ability to place boxes anywhere on a 2D canvas, but it’s still a long way from being able to slap any old brushstroke down. One commenter compared this to diagrammatic notation in category theory:

Category theorists deliberately use only a tiny, restricted set of the possibilities of drawing diagrams. If you try to get a visual artist or designer interested in the diagrams in a category theory book, they are almost certain to tell you that nothing “visual” worth mentioning is happening in those figures.

Visual culture is distinguished by its richness on expressive dimensions that text and category theory diagrams just don’t have.

— theoh
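To make the “finite set of box types and defined rules for connecting them” idea concrete, here is a minimal Python sketch. The box types, port types and the `can_connect` helper are hypothetical and not modelled on any particular tool.

```python
# Hypothetical box types: name -> (input port types, output port type).
BOX_TYPES = {
    "Constant": ((), "number"),
    "Add": (("number", "number"), "number"),
    "GreaterThan": (("number", "number"), "bool"),
    "Select": (("bool", "number", "number"), "number"),
}


def can_connect(source_box, target_box, target_port):
    """A wire is legal only if the source's output type matches the target port."""
    _, output_type = BOX_TYPES[source_box]
    input_types, _ = BOX_TYPES[target_box]
    if not 0 <= target_port < len(input_types):
        return False  # the target box has no such input port
    return input_types[target_port] == output_type
```

Where the boxes sit on the canvas never enters into it. The program’s meaning is fixed entirely by a small discrete vocabulary and its wiring rules, much as it is in text.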

Drag-n-drop editors are a bit further towards the freeform end of the spectrum, allowing UI elements to be resized continuously to arbitrary sizes. But there are still constraints — maybe your widgets have to be rectangles, for example, rather than any old hand-drawn shape. And, as discussed in earlier sections, there’s a tension between visual specificity and dynamic programming of many potential visual states at once. Drag-n-drop editors arguably lose a lot of the features of ‘true’ languages by giving up structure, and more programmatic elements are likely to still use a constrained set of primitives.

Finally, there was an insightful comment questioning how successful these constrained visual languages are compared to text:

I am not aware of a constrained pictorial formalism that is both general and expressive enough to do the job of a programming language (directed graphs may be general enough, but are not expressive enough; when extended to fix this, they lose the generality.)

… There are some hybrids that are pretty useful in their areas of applicability, such as state transition networks, dataflow models and Petri nets (note that these three examples are all annotated directed graphs.)

This could be a whole blog post topic in itself, and I may return to it in a follow-up post — Dutilh Novaes makes similar points in her discussion of tractability vs expressiveness in formal logic. Too much to go into here, but I do think this is important.

Grab bag of other interesting points

This section is exactly what it says — interesting points that didn’t fit into any of the categories above.

Allowing syntax errors

This is a surprising one I wouldn’t have thought of, but it came up several times and makes a lot of sense on reflection. A lot of visual programming tools are too good at preventing syntax errors. Temporary errors can actually be really useful for refactoring:

This is also one of the beauties of text programming. It allows temporary syntax errors while restructuring things.

I’ve used many visual tools where every block you laid out had to be properly connected, so in order to refactor it you had to make dummy blocks as input and output and all other kinds of crap. Adding or removing arguments and return values of functions/blocks is guaranteed to give you rsi from excessive mousing.

— Too

I don’t quite understand why this is so common in visual tools specifically, but it may be to do with the underlying representation? One comment pointed out that this was a more general problem with any kind of language based on an abstract syntax tree that has to be correct at every point:

For my money, the reason for this is that a human editing code needs to write something invalid – on your way from Valid Program A to Valid Program B, you will temporarily write Invalid Jumble Of Bytes X. If your editor tries to prevent you writing invalid jumbles of bytes, you will be fighting it constantly.

The only languages with widely-used AST-based editing is the Lisp family (with paredit). They get away with this because:

- Lisp ‘syntax’ is so low-level that it doesn’t constrain your (invalid) intermediate states much. (ie you can still write a (let) or (cond) with the wrong number of arguments while you’re thinking).

- Paredit modes always have an “escape hatch” for editing text directly (eg you can usually highlight and delete an unbalanced parenthesis). You don’t need it often (see #1) – but when you need it, you really need it.

— meredydd

Maybe keeping the program valid at every step is just a more common way to build a visual language?
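The “Valid Program A to Valid Program B” journey is easy to reproduce in text. The sketch below uses Python’s standard `ast` module to check three snapshots of a made-up edit (wrapping a call in a new `if`); the snippet contents and the `is_valid` helper are invented for illustration.

```python
import ast

# Three snapshots of a hypothetical edit: wrapping a call in a new `if`.
SNAPSHOTS = [
    "send(report)\n",                 # valid program A
    "if ready:\nsend(report)\n",      # mid-edit: body not yet indented
    "if ready:\n    send(report)\n",  # valid program B
]


def is_valid(source):
    """Return True if `source` parses as Python, False on a syntax error."""
    try:
        ast.parse(source)
    except SyntaxError:  # IndentationError is a subclass of SyntaxError
        return False
    return True


validity = [is_valid(snapshot) for snapshot in SNAPSHOTS]
```

A text editor happily lets you pass through the middle snapshot. An editor that insisted on a valid tree at every step would have to offer some other route from A to B.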

Hybrids

Take what we all see at the end of whiteboard sessions. We see diagrams composed of text and icons that represent a broad swath of conceptual meaning. There is no reason why we can’t work in the same way with programming languages and computer.

— bmitc

Another recurring theme was a wish for hybrid tools that combined the good parts of visual and text-based tools. One example that came up in the ‘information density’ section was doing maths in a textual format in an otherwise visual tool, which seems to work quite well:

UE4 Blueprints are visual programming, and are done very well. For a lot of things they work are excellent. Everything has a very fine structure to it, you can drag off pins and get context aware options, etc. You can also have sub-functions that are their own graph, so it is cleanly separated. I really like them, and use them for a lot of things.

The issue is that when you get into complex logic and number crunching, it quickly becomes unwieldy. It is much easier to represent logic or mathematics in a flat textual format, especially if you are working in something like K. A single keystroke contains much more information than having to click around on options, create blocks, and connect the blocks. Even in a well-designed interface.

Tools have specific purposes and strengths. Use the right tool for the right job. Some kind of hybrid approach works in a lot of use cases. Sometimes visual scripting is great as an embedded DSL; and sometimes you just need all of the great benefits of high-bandwidth keyboard text entry.

Even current text-based environments have some hybrid aspect, as most IDEs support syntax highlighting, autocompletion, code folding etc to get some of the advantages of visualisation.

Visualising the wrong thing

The last comment I’ll quote is sort of ranty but makes a deep point. Most current visual tools only visualise the kind of things (control flow, types) that are already displayed on the screen in a text-based language. It’s a different representation of fundamentally the same thing. But the visualisations we actually want may be very different, and more to do with what the program does than what it looks like on the screen.

‘Visual Programming’ failed (and continues to fail) simply because it is a lie; just because you surround my textual code with boxes and draw arrows showing the ‘flow of execution’ does not make it visual! This core misunderstanding is why all these ‘visual’ tools suck and don’t help anyone do anything practical (read: practical = complex systems).

When I write code, for example a layout algorithm for a set of gui elements, I visually see the data in my head (the gui elements), then I run the algorithm and see the elements ‘move’ into position dependent upon their dock/anchor/margin properties (also taking into account previously docked elements positions, parent element resize delta, etc). This is the visual I need to see on screen! I need to see my real data being manipulated by my algorithms and moving from A to B. I expect with this kind of animation I could easily see when things go wrong naturally, seeing as visual processing happens with no conscious effort.

Instead visual programming thinks I want to see the textual properties of my objects in memory in fancy coloured boxes, which is not the case at all.

— hacker_9

I’m not going to try and comment seriously on this, as there’s almost too much to say: it points toward a large number of potential tools and visual paradigms, many of which are speculative or experimental. But it’s useful to end here, as a reminder that the scope of visual programming is not just some boxes with arrows between them.

Final thoughts

This post is long enough already, so I’ll keep this short. I collected all these quotes as a sort of exploratory project with no very clear aim in mind, and I’m not yet sure what I’m going to do with it. I probably want to write at least one follow-up post making links back to the Dutilh Novaes and Ong books on text as a technology. Other than that, here are a few early ideas that came to mind as I wrote it:

How much is ‘visual programming’ a natural category? I quickly discovered that commenters had very different ideas of what ‘visual programming’ meant. Some of these are at least partially in tension with each other. For example, drag-n-drop UI editors often allow near-arbitrary placement of UI elements on the screen, using an intuitive visual interface, but are not necessarily very programmatic. On the other hand, node-based editors allow complicated dynamic logic, but are less ‘visual’, reproducing a lot of the conventions of standard text-based programming. Is there a finer-grained classification that would be more useful than the generic ‘visual programming’ label?

Meaning vs fluency. One of the most appealing features of visual tools is that they can make certain inherently visual actions much more intuitive (a colour picker is a very simple example of this). And proponents of visual programming are often motivated by making programming more understandable. At the same time, a language needs to be a fluent medium for writing code quickly. At the fluent stage, it’s common to ignore the semantic meaning of what you’re doing, and rely on unthinkingly executing known patterns of symbol manipulation instead. Designing for transparent meaning and designing for fluency are not the same thing: Vim is a great example of a tool that is incomprehensible to beginners but excellent for fluent text manipulation. It could be interesting to explore the tension between them.

‘Missing tooling’ deep dives. I’m not personally all that interested in following this up, as it takes me some way from the ‘text as technology’ angle I came in from, but it seems like an obvious one to mention. The ‘missing tooling’ subsections of this post could all be dug into in far more depth. For each one, it would be valuable to compare many existing visual environments, and understand what’s already available and what the limitations are compared to normal text.

Is ‘folk wisdom from internet forums’ worth exploring as a genre of blog post? Finally, here’s a sort of meta question, about the form of the post rather than the content. There’s an extraordinary amount of hard-to-access knowledge locked up in forums like Hacker News. While writing this post I got distracted by a different rabbit hole about Delphi, which somehow led me to another one about Smalltalk, which… well, you know how it goes. I realised that there were many other posts in this genre that could be worth writing. Maybe there should be more of them?

If you have thoughts on these questions, or on anything else in the post, please leave them in the comments!

I’m replacing a Labview program with one written in Rust, so the topic is pretty interesting for me right now.

My current gripe with Labview is that it’s a unique programming artifact that’s further held back by being a licensed, proprietary product. While people are still making useful tooling around it, the number of people with the knowledge and interest to do so is pretty small, and you’re limited to working around Labview, rather than with it, since you can’t access the source. It just seems like too much of a backwater in a lot of ways to really benefit from rising-tide developments in coding practice that are improving life working with other languages–even the version control story is still iffy! A lot of these visual programming tools probably suffer from a similar situation.

I was trying to imagine an alternative that would be more successful. I came up with a visual editor that compiles to some graph or hierarchy specification, and only recognizes modules or building blocks written in the same. Plugins would take that and generate code in a target language for a specific purpose, like making a state machine or signal processing pipeline. You would then go and program around these structures in text, where it’s more effective. A quick search showed that there is a huge cottage industry of open source/freeware/proprietary state machine code generators that do exactly this. A lot of these are one-offs, not extensible, etc., probably for good reason. But maybe there’s the right alignment of utility that bundles up all the niche end-users and lets everyone benefit. Canonicalizing a visual representation of a given graph could let you autoformat it without spending hours redoing all the wires by hand! Formal verification of the correctness of the generated code could be done. Maybe dozens of niche application areas using the same tool are enough to get these improvements that would never happen in their individual software tide pools? Once there was a visual programming paradigm that didn’t have so many dealbreakers, and wasn’t totally missing out on modern tooling conveniences, it would be a more apples-to-apples comparison with text.

Right now it seems like a lot of scientific instrumentation control is moving from Labview to Python, which is awful in its own way. But it’s progress insofar as Python’s problems are everyone’s problems.


I believe that diagrams are at their most useful when they are deployed as a communications tool. When discussing our software, we commonly draw a diagram to help illustrate how functionality is divided up among components, and to show which components are impacted by particular functional chains. They are a powerful tool for communicating and coordinating the work of multiple people or multiple teams. If our software does not provide us with diagrams itself, then we will commonly create them ourselves in our technical documentation or on whiteboards as an aide to collaboration.

However, as we descend in our system towards finer and more detailed levels of granularity, two things happen. First of all, fewer people are impacted by very ‘local’ design decisions, so the importance of communication diminishes. Secondly, the frequency of change increases, so the importance of mutability and ease-of-editing increases.

The requirements that we place on our engineering tools depend largely on what we are trying to do with them. Communicating and coordinating work across a large engineering organization places fundamentally different requirements on our engineering tools than does, say, working alone to solve a challenging problem that requires the creation of new concepts and abstractions.

There are many different ways to work with software, and although each share much of the same flavor (dealing with informational complexity), the nature of these challenges is often distinct enough that the different requirements may lead to vastly different solutions.

For my part, I have a lot of faith in the general utility of tools which allow for the easy generation of diagrammatic representations of high-level structure, but which naturally decay to a more conventional textual representation at finer levels of granularity.


A great read. Thanks!

In the section “Final thoughts”, subsection “How much is ‘visual programming’ a natural category?”, I think you may have accidentally swapped the descriptions for the concepts “drag-n-drop UI editors” and “node-based editors”.


I both really dislike visual programming (dragging boxes around is oh so tedious) and really like it for tasks that it naturally fits (picking a colour, understanding a state machine). Very informative to see you sort and categorize its different aspects.

On your last point, I find this kind of folk wisdom collection and summarisation highly valuable but rarely seen. Please do more!


Allowing syntax errors; Hazelnut is a structure editor that addresses exactly this. “Live Functional Programming with Typed Holes”


Great post, very interesting!

Even though it is not visual programming in the sense you are writing about, this post made me think of the Piet programming language: https://www.dangermouse.net/esoteric/piet/samples.html

Have you heard about it? Programs in Piet look like abstract paintings in the style of Piet Mondrian. Not practical, but clever and fun!


Sad my favourite visual programming system DRAKON did not make the cut. It has an interesting backstory from the Russian space program.


There were a couple of references to DRAKON in the threads I summarised, but it never came up as a main topic of conversation. I spent a (very) few minutes looking it up and it does look interesting!


An interesting aspect of discussions about visual programming I find is that in any given discussion, there always seems to be a contingent of people who staunchly declare that visual programming has utterly failed in every way, and that we should never use it for anything. From my perspective, as a Houdini and Nuke user in VFX, it has been used in the making of nearly every major film and TV commercial for the past 20 years or so (these are projects that meet deadlines and are financially viable).

But if this is the case, why such bias and blindness to it? One issue is that, it seems that to a significant number of programmers, programming is *about writing code*, as opposed to programming being an activity that serves some purpose, like assisting in addressing an engineering challenge, or a potentially useful component that might or might not be helpful in assisting people to do their jobs (if a domain is rapidly changing, and involves medium-sized tasks, then doing things by hand is a lot of times faster than trying to write and debug flexible programs). My shorthand is that, from this perspective, if you use units in your work (kg, feet, dollars), you’re tying yourself to physical constraints, which don’t exist to programmers, and there’s a good chance that what you do will be viewed as “domain-specific” and not considered a proper part of programming.

Some part of this attitude seems to arise from the fact that there’s an asymmetry in how people think through problems: for someone with a strongly visual way of working, collapsing dimensionality to 1D linear thinking is possible, if somewhat painful; for someone accustomed to engaging solely in 1D linear thinking, anything beyond that simply doesn’t exist. Communicating visual ideas to someone with that mindset feels very much like talking to a blind person who needs rules to follow to navigate through a room, since they can’t just look around and walk over and do something.

Another issue that I suspect comes up when people first experience a visual tool, is that raising the dimensionality immediately exposes problems, in the way that turning the lights on might expose your messy room, where you’ve been bumping around in the dark. A significant aspect of visual tools that are so prevalent in VFX pipelines is that they can be used to communicate *intent* and *purpose* to non-expert users: move this chunk to the side to communicate that they don’t have to worry about this right now, color these nodes related shades so that someone can step through what they need to do without knowing all the details, hide this for artists working on shots, expose it for those working on tools. Trying to work only with “commented out” capability rather than “move to the side or just make it smaller for now” capability would mean missing deadlines. If a file *looks messy*, it might indicate that someone is working on it, and methods have not yet stabilized. *Messiness* is an important communicative aspect of understanding what you’re doing, and “de-messifying” (untangling something that you not only know is messy, but that any non-expert could examine and *see* is messy) is an incremental means of gaining understanding. This incremental part is kind of missing from a code representation, where it is only through sitting with something for long enough, and having enough expertise in the area would someone be able to even tell that something is “a stinking mess,” or “clean code,” or somewhere in between.

Programmers being isolated and segregated from the users of whatever it is that they’re making and working for “tech companies” has led to a point where it seems reasonable to have books like “C++ for 5 year olds!” introducing nullish coalescing operators in Chapter 1, or things along this line. I think a significant part of introducing “visual tools” of any sort, would simply be introducing the notion that physical constraints are what matter to most people, since we live in the world where we have to deal with them; the various visual paradigms are simply introducing physical constraints of various types in ways that make sense to people who know about and have experience with them. This is, indeed, “constraining programming” to be a less free-form activity; this is exactly what is needed and what we want.


Interesting point about communicating intent to non-experts by being able to physically move things out of the way.

Outside of programming, I really appreciate this ability in Inkscape, where I can have a bunch of things ‘off to the side’ of the frame that I can bring in as I need. Definitely a richer experience than toggling comments!


To clarify, by “non-expert” I don’t necessarily mean people who are not knowledgable about programming or whatever the case may be; I mean people who aren’t involved *in this particular part of the project*, which of course would mean lots of very “expert” people, who just haven’t been working on this. In the programmer sense, a “non-expert” would also mean, yourself after you’ve been off the project and forgotten how everything is set up. Arrows pointing to things to explain what’s going on, things pulled off to the side bc they’re not relevant for now, color-coded chunks, things demarcated by who was working on them, somehow this communicative aspect is derided by a certain set of programmers as “for beginners” or “for know-nothing plebians who will eventually grow out of it”, rather than as a vital part of large-scale projects with deadlines.

Another thing I forgot to mention is that dataflow programming is isomorphic to Lisp; one is constructing pure computations on the primitives of a domain (which in this case are images or meshes rather than lists). Somehow this often seems to go unrecognized in discussions, which seem to take visual paradigms as intrinsically “other.” Under the hood, dataflow graphs are XML documents, which are Lisp programs in disguise; the fact that we have a useful representation to the user as a graph of nodes doesn’t change the fact that we’re accomplishing the exact same thing that could be done in text-based format, but instead using a representation that leverages the enormous spatial and visual processing capabilities that humans have, so that they can accomplish their work given the constraints that these types of projects impose.

I think a useful means of comparing text to visual programming (by virtue of it being a constrained domain) is to examine shader programming (ex. GLSL programs) vs working with shading graphs. The salient point is that, the method of programming shaders factors out or discourages use of structures which are unhelpful in this context (no while loops, discourage branching). But this leads to a style of programming that makes those structures less necessary (filtering rather than branching). An initial reaction might be that *so much is not possible now*, but a more mature view is that, given duality, there’s nothing that’s not possible, you just have to rethink how you go about approaching your problem.


What David MacIver called “ghost knowledge” (mentioned above) is similar to what is more commonly called “informal knowledge” in the research literature. I just searched the web for “ghost knowledge” + “informal knowledge” and there are apparently no sources that use both terms. Another term that just came to mind is “invisible knowledge” (the same analogy as ghost knowledge, of course, since ghosts are invisible), and a web search finds some sources that use both terms “invisible knowledge” + “informal knowledge”. There may be other terms like this.

As others have commented above, the kind of synthesis of informal knowledge that this blog post does can be really valuable.

A link to this post was included in the O’Reilly Programming Newsletter yesterday, so if you have a sudden spike in page views, that may be the source.


I’ve also meant to look up ‘ghost knowledge’ and what similar terms are already in use. David MacIver’s usage comes from a paper about ‘ghost theorems’, but I think the expansion to knowledge in general is original to him afaik. I’ve seen a lot of people pick up on it, but it would be useful to know what terminology already exists.

Also thanks for letting me know where all the traffic is coming from! I could see it was from the oreilly.com domain but couldn’t see any obvious source, so I was guessing a newsletter or something – good to have it confirmed.


Useful summary, thanks. I referenced this page in my article:

‘Visual vs text based programming, which is better?’
