[Written as part of Notebook Blog Month.]

Everybody hates neoliberalism, it’s the law. But what is it?

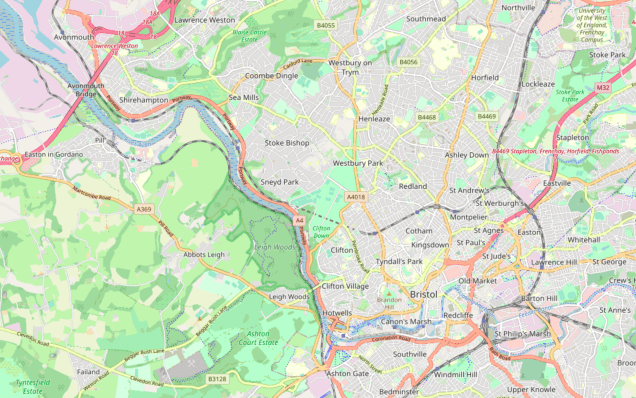

This is probably the topic I’m most ignorant about and least prepared for on the whole list, and I wasn’t going to do it. But it’s good prep for the bullshit jobs post, which was a popular choice, so I’m going to try. I’m going to be trying to articulate my current thoughts, rather than attempting to say anything original. And also I’m not really talking about neoliberalism as a coherent ideology or movement. (I think I’d have to do another speedrun just to have a chance of saying something sensible.) More like “neoliberalism”, scarequoted, as a sort of diffuse cloud of associations that the term brings to mind. Here’s my cloud (very UK-centric):

- Big amorphous companies with bland generic names like Serco or Interserve, providing an incoherent mix of services to the public sector, with no obvious specialism beyond winning government contracts

- Public-private partnerships

- Metrics! Lots of metrics!

- Incuriosity about specifics. E.g. management by pushing to make a number go up, rather than any deep engagement with the particulars of the specific problem

- Food got really good over this period. I think this actually might be relevant and not just something that happened at the same time

- Low cost short-haul airlines becoming a big thing (in Europe anyway – don’t really understand how widespread this is)

- Thinking you’re on a public right of way but actually it’s a private street owned by some shopping centre or w/e. With private security and lots of CCTV

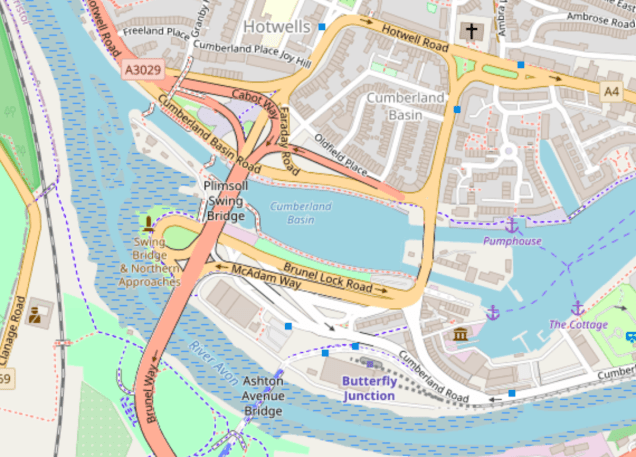

- Post-industrial harbourside developments with old warehouses converted into a Giraffe and a Slug and Lettuce

- A caricatured version of Tony Blair’s disembodied head is floating over the top of this whole scene like a barrage balloon. I don’t think this is important but I thought you’d like to know

I’ve had this topic vaguely in mind since I read a blog post by Timothy Burke, a professor of modern history, a while back. The post itself has a standard offhand ‘boo neoliberalism’ side remark, but then when challenged in the comments he backs it up with an excellent, insightful sketch of what he means. (Maybe this post should just have been a copy of this comment, instead of my ramblings.)

> I’m sensitive to the complaint that “neoliberalism” is a buzz word that can mean almost everything (usually something the speaker disapproves of).
>
> A full fleshing out is more than I can provide, though. But here’s some sketches of what I have in mind:
>
> 1) The Reagan-Thatcher assault on “government” and aligned conceptions of “the public”–these were not merely attempts to produce new efficiencies in government, but a broad, sustained philosophical rejection of the idea that government can be a major way to align values and outcomes, to tackle social problems, to restrain or dampen the power of the market to damage existing communities. “The public” is not the same, but it was an additional target: the notion that citizens have shared or collective responsibilities, that there are resources and domains which should not be owned privately but instead open to and shared by all, etc. That’s led to a conception of citizenship or social identity that is entirely individualized, privatized, self-centered, self-affirming, and which accepts no responsibility to shared truths, facts, or mechanisms of dispute and deliberation.
>
> 2) The idea of comprehensively measuring, assessing, quantifying performance in numerous domains; insisting that values which cannot be measured or quantified are of no worth or usefulness; and constantly demanding incremental improvements from all individuals and organizations within these created metrics. This really began to take off in the 1990s and is now widespread through numerous private and public institutions.
>
> 3) The simultaneous stripping bare of ordinary people to numerous systems of surveillance, measurement, disclosure, monitoring, maintenance (by both the state and private entities) while building more and more barriers to transparency protecting the powerful and their most important private and public activities. I think especially notable since the late 1990s and the rise of digital culture. A loss of workplace and civil protections for most people (especially through de-unionization) at the same time that the powerful have become increasingly untouchable and unaccountable for a variety of reasons.
>
> 4) Nearly unrestrained global mobility for capital coupled with strong restrictions on labor (both in terms of mobility and in terms of protection). Dramatically increased income inequality. Massive “shadow economies” involving illegal or unsanctioned but nevertheless highly structured movements of money, people, and commodities. Really became visible by the early 1990s.

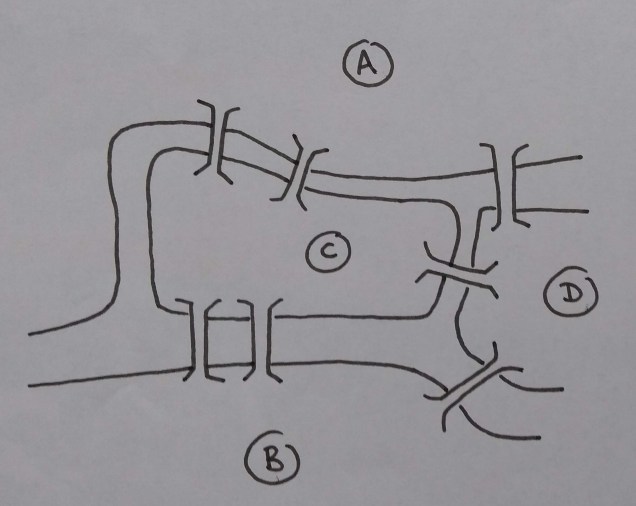

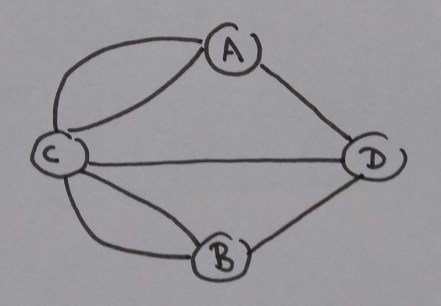

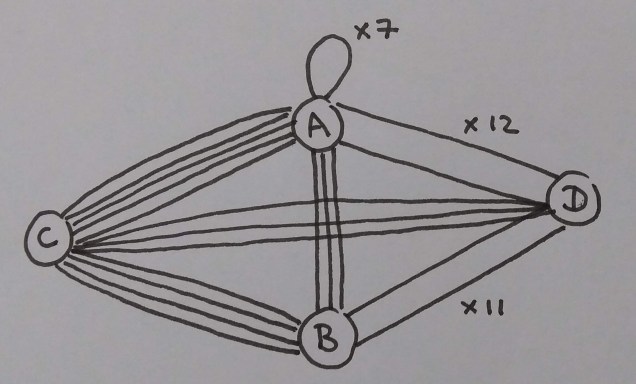

A lot of the features in my association cloud match pretty well: metrics, surveillance, privatisation. Didn’t really pick up much from point 4. I think 2 is the one which interests me most. My read on the metric stuff is that there’s a genuinely useful tool here that really does work within its domain of application but is disastrous when applied widely to everything. The tool goes something like:

- let go of a need for top-down control

- fragment the system into lots of little bits, connected over an interface of numbers (money, performance metrics, whatever)

- try to improve the system by hammering on the little bits in ways such that the numbers go in the direction you want. This could be through market forces, or through metrics-driven performance improvements.

If your problem is amenable to this kind of breakdown, I think it actually works pretty well. This is why I think ‘food got good’ is actually relevant and not a coincidence. It fits this playbook quite nicely:

- It’s a known problem. People have been selling food for a long time and have some well-tested ideas about how to cook, prep, order supplies, etc. There’s innovation on top of that, but it’s not some esoteric new research field.

- Each individual purchase (of a meal, cake, w/e) is small and low-value. So the domain is naturally fragmented into lots of tiny bits.

- This also means that lots of people can afford to be customers, increasing the number of tiny bits

- Fast feedback. People know whether they like a croissant after minutes, not years.

- Relevant feedback. People just tell you whether they like your croissants, which is the thing you care about. You don’t need to go search for some convoluted proxy measure of whether they like your croissants.

- Lowish barriers to entry. Not especially capital-intensive to start a cafe or market stall compared with most businesses.

- Lowish regulations. There are rules for food safety, but it’s not like building planes or something.

- No lock-in for customers. You can go to the donburi stall today and the pie and mash stall tomorrow.

- All of this means that the interface layer of numbers can be an actual market, rather than some faked-up internal market of metrics to optimise. And it’s a pretty open market that most people can access in some form. People don’t go out and buy trains, but they do go out and buy sandwiches.

There’s another very important, less wonky factor that breaks you out of the dry break-it-into-numbers method I listed above. You ‘get to cheat’ by bringing in emotional energy that ‘comes along for free’. People actually like food! They start cafes because they want to, even when it’s a terrible business idea. They already intrinsically give a shit about the problem, and markets are a thin interface layer over the top rather than most of the thing. This isn’t going to carry over to, say, airport security or detergent manufacturing.

As you get further away from an idealised row of spherical burger vans things get more complicated and ambiguous. Low cost airlines are a good example. These actually did a good job of fragmenting the domain into lots of bits that were lumped together by the older incumbents. And it’s worked pretty well, by bringing down prices to the point where far more people can afford to travel. (Of course there are also the climate change considerations. If you ignore those it seems like a very obvious Good Thing, once you include them it’s somewhat murkier I suppose.)

The price you pay is that the experience gets subtly degraded at many points by the optimisation, and in aggregate these tend to produce a very unsubtle crappiness. For a start there’s the simple overhead of buying the fragmented bits separately. You have to click through many screens of a clunky web application and decide individually about whether you want food, whether you want to choose your own seat, whether you want priority queuing, etc. All the things you’d just have got as default on the old, expensive package deal. You also have to say no to the annoying ads trying to upsell you on various deals on hotels, car rentals and travel insurance.

Then there are all the ways the flight itself becomes crappier. It’s at a crap airport a long way from the city you want to get to, with crappy transport links. The flight is a cheap slot at some crappy time of the early morning. The plane is old and crappily fitted out. You’re having a crappy time lugging around the absolute maximum amount of hand luggage possible to avoid the extra hold luggage fee. (You’ve got pretty good at optimising numbers yourself.)

This is often still worth it, but can easily tip into just being plain Too Crappy. I’ve definitely over-optimised flight booking for cheapness and regretted it (normally when my alarm goes off at three in the morning).

Low cost airlines seem basically like a good idea, on balance. But then there are the true disasters, the domains that have none of the natural features that the neoliberal playbook works on. A good example is early-stage, exploratory academic research. I’ve spent too long on this post already. You can fill in the depressing details yourself.